Is the Micron AI Memory Boom just another chip cycle upswing or the start of a structurally different AI super‑cycle?

Is the Micron AI Memory Boom changing the cycle?

The bull case around the Micron AI Memory Boom rests on unprecedented demand for DRAM and NAND as hyperscalers race to deploy AI infrastructure. Micron generates roughly 80% of revenue from DRAM and 20% from NAND, and both markets are in structural undersupply as AI training clusters and inference farms absorb available capacity. High‑bandwidth memory (HBM), which is packaged alongside GPUs and custom AI accelerators from players like NVIDIA, requires about three times the wafer capacity of conventional DRAM, tightening the supply‑demand balance and pushing prices and margins sharply higher.
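The wafer trade-off behind that squeeze can be sketched with simple arithmetic. The snippet below is a rough illustration rather than a model of any real fab. It takes the roughly 3x wafer requirement cited above and assumes that it applies per unit of output. The function name, the 100-wafer base and the HBM shares are all hypothetical.

```python
def effective_output(total_wafers: float, hbm_share: float, trade_ratio: float = 3.0) -> float:
    """Return output in conventional-DRAM-equivalent wafers.

    Assumption: a unit of HBM output consumes `trade_ratio` times the wafer
    capacity of the same unit of conventional DRAM (about 3x per the article).
    """
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    conventional = total_wafers * (1.0 - hbm_share)   # wafers left for commodity DRAM
    hbm = total_wafers * hbm_share / trade_ratio      # HBM wafers, discounted by the trade ratio
    return conventional + hbm


if __name__ == "__main__":
    # Hypothetical shares of wafer starts redirected to HBM.
    for share in (0.0, 0.1, 0.2, 0.3):
        out = effective_output(100.0, share)
        print(f"HBM share {share:.0%}: equivalent output {out:.1f} of 100 wafers")
```

Under these assumptions, moving 30% of wafer starts to HBM leaves the equivalent of 80 conventional wafers of output from a base of 100, which is why an HBM ramp tightens commodity supply even when total capacity is flat.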

Micron and its two major DRAM rivals, Samsung and SK Hynix, are concentrating capital on HBM and AI‑optimized products, leaving less incremental supply for commodity DRAM and NAND. That, in turn, has driven a parallel surge in enterprise SSD pricing as data centers scramble for storage to hold massive AI training datasets. Multiple industry forecasts suggest that neither DRAM nor NAND markets are likely to see meaningful relief before late 2027, giving Micron several years of potentially elevated pricing power.

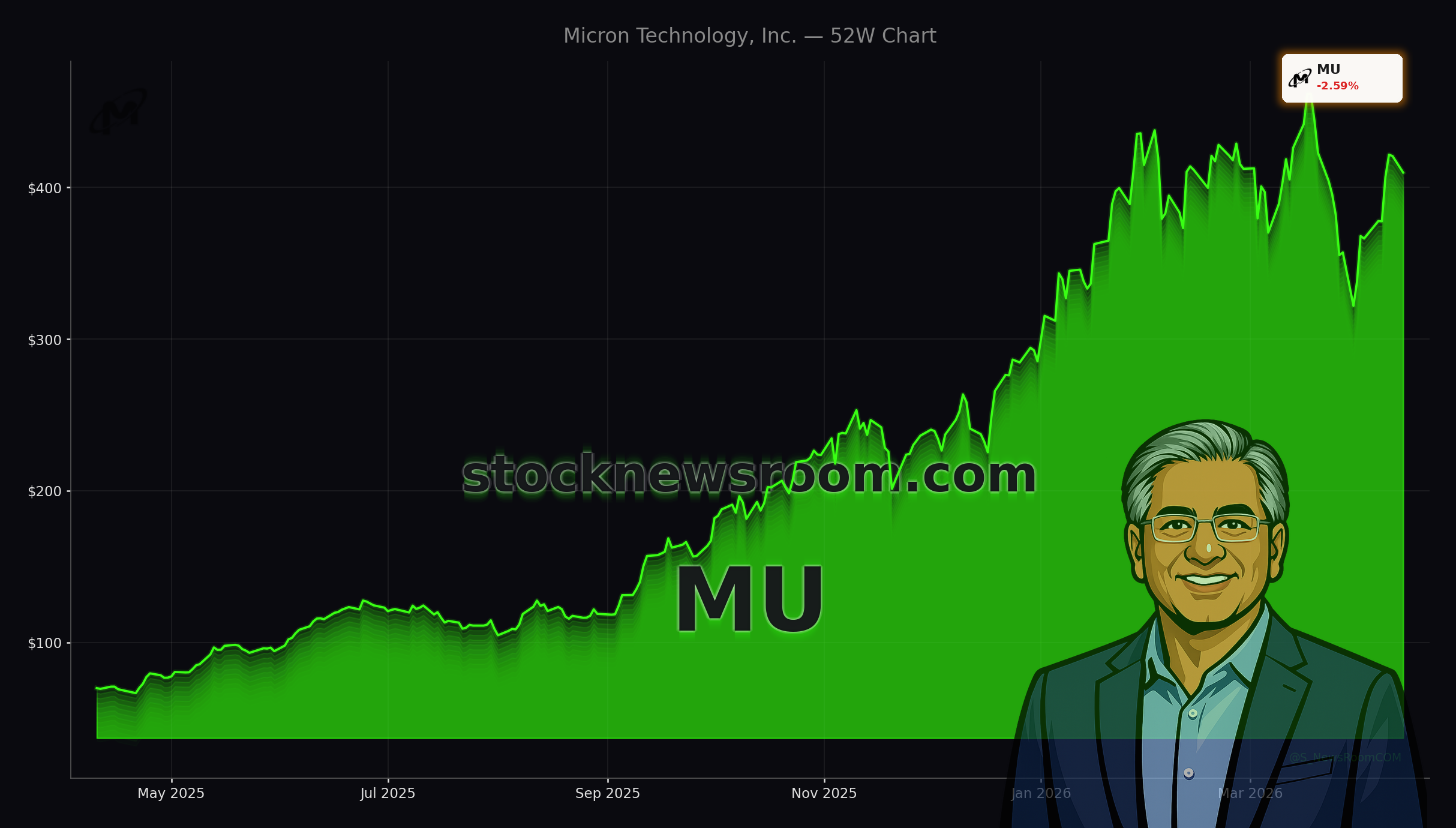

Financially, this backdrop has already translated into surging revenue and much stronger gross margins. One recent industry analysis highlighted that Micron nearly tripled revenue in its latest reported quarter as memory prices reset higher, while projecting further record growth ahead as AI servers become a larger slice of the overall compute market.

How big is Micron’s $100 billion capacity bet?

To lock in its role at the heart of the AI memory boom, Micron has unveiled a massive long‑term manufacturing expansion, including plans to invest up to $100 billion in New York to build additional memory capacity. This multi‑decade commitment is designed to align with expected AI‑driven demand and to leverage U.S. incentives such as the CHIPS Act, reinforcing Micron’s strategic position as a domestic supplier of advanced DRAM and HBM.

At the same time, Micron is shifting its commercial model. Rather than relying on short‑term, highly cyclical quarterly contracts, the company is signing three‑ to five‑year HBM supply agreements with major cloud providers and AI leaders. Some of these deals reportedly include pricing floors and even upfront capacity payments, improving earnings visibility and dampening the downside in future downturns.

Wall Street analysts have taken notice. KeyBanc Capital Markets analyst John Vinh recently reiterated an Overweight rating and a $600 price target, citing the transition to long‑term agreements, sold‑out HBM capacity through at least 2026, and expectations for continued strength in DRAM and NAND pricing. Another independent forecast pegs Micron’s forward price‑to‑earnings multiple in the high single digits, with upside of more than 60% if current AI demand trends persist.
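To see what an upside figure like that implies for valuation, a quick back-of-the-envelope calculation helps. The sketch below assumes earnings estimates stay unchanged, so any price gain comes entirely from a higher multiple. The 8x starting multiple is a hypothetical stand-in for "high single digits", not a figure from the forecast, and the function name is illustrative.

```python
def rerated_multiple(forward_pe: float, upside: float) -> float:
    """Forward P/E after the price rises by `upside`, with earnings held constant."""
    if forward_pe <= 0:
        raise ValueError("forward_pe must be positive")
    return forward_pe * (1.0 + upside)


if __name__ == "__main__":
    starting_pe = 8.0   # hypothetical "high single digit" multiple
    upside = 0.60       # the 60% upside mentioned in the forecast
    new_pe = rerated_multiple(starting_pe, upside)
    print(f"{starting_pe:.1f}x forward P/E with {upside:.0%} upside -> {new_pe:.1f}x")
```

On those assumptions, a 60% gain would lift the multiple from 8x to about 12.8x. If earnings estimates rise at the same time, the re-rating needed to reach the same price is smaller.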

How does Micron stack up against AI leaders?

The Micron AI Memory Boom is deeply intertwined with the broader AI ecosystem led by NVIDIA, Advanced Micro Devices (AMD) and hyperscale cloud platforms. While those chip designers garner most of the headlines and command premium multiples, memory suppliers like Micron are increasingly viewed as indispensable leverage plays on AI capex. Zacks Investment Research recently highlighted Micron alongside AMD, Hewlett Packard Enterprise and Agilysys as key beneficiaries of integrated AI systems demand, as enterprises adopt multi‑cloud and AI‑centric architectures.

Unlike GPU vendors that face intensifying competition and regulatory scrutiny, Micron operates in a consolidated triopoly in DRAM, with high barriers to entry and enormous capital requirements. For U.S. investors already heavily exposed to AI compute through NVIDIA or CPU/GPU bets via AMD, Micron offers differentiated exposure to the memory and storage layer that every AI model depends on. The same AI build‑out that boosts Micron also fuels demand at ecosystem players such as Apple and Tesla as they roll out more AI‑heavy devices and autonomous features, further entrenching the need for high‑performance memory and storage.

Still, some institutional investors are trimming positions after the stock’s sharp appreciation. KG&L Capital Management, for example, reduced its Micron stake by nearly 20% in late 2025, reflecting concerns about valuation and the typical boom‑bust nature of memory markets, even amid the current Micron AI Memory Boom narrative.

Could classic memory risks derail Micron?

The bear case remains anchored in the industry’s history. DRAM cycles have typically lasted two to three years from peak to trough. With the last trough around early 2023, skeptics argue that Micron may already be approaching a cyclical peak by 2026–2027. In past cycles, periods of tight supply and high prices pushed customers to over‑order and manufacturers to over‑build capacity, eventually triggering painful gluts and price collapses.

NAND has been especially volatile, with a recent crash following pandemic‑era demand pull‑forward for consumer electronics. If AI capex from hyperscalers slows after the current $700 billion spending wave, or if memory‑saving innovations such as more efficient quantization algorithms reduce DRAM requirements per AI workload, Micron’s pricing power and earnings could come under pressure.

For now, though, long‑term HBM contracts, a multi‑year capacity pipeline and supportive analyst views – including forecasts that assign Micron a forward P/E as low as 4x fiscal 2027 earnings – suggest that the market may still be underestimating how transformative the Micron AI Memory Boom could be for the company’s long‑term earnings base.

Related Coverage

Investors looking for a deeper dive into Micron’s valuation and risk profile can explore the detailed scenario analysis in “Micron Technology Forecast: AI Boom or Deep Value Warning”, which weighs whether the current AI‑driven upcycle is sustainable. For a broader sector comparison, “AMD AI Forecast: 60% Data Center Boom vs. Nvidia” examines how AMD’s data center ambitions stack up against its larger rival and what that means for capital flowing into AI infrastructure, a key driver of Micron’s memory demand.

The Micron AI Memory Boom has turned the company into a central pillar of the AI supply chain, combining record pricing power, long‑term contracts and a bold $100 billion U.S. investment plan. For American portfolios already tilted toward GPU and cloud leaders, Micron offers a high‑beta, strategically vital way to participate in the AI infrastructure build‑out. The next several quarters of earnings and capex announcements from hyperscalers will show whether the boom matures into a durable super‑cycle or proves to be simply the strongest phase of the industry’s familiar memory cycle.