Are Micron’s record-shattering earnings the start of a lasting AI memory supercycle or just another peak before the next downturn?

How did Micron’s earnings stun Wall Street?

Micron’s latest results, for fiscal Q2 2026 (quarter ended Feb. 26), were extraordinary. Revenue surged to about $23.9 billion, up roughly 196% year over year and 75% sequentially, as AI data center demand for DRAM, NAND and especially high‑bandwidth memory (HBM) exploded. Adjusted EPS jumped almost eightfold to $12.20, gross margin reached a record of nearly 75%, and operating margin climbed to roughly 69%. The company generated around $6.9 billion in free cash flow.
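The growth rates above can be inverted to see what base they imply. The short sketch below does only that arithmetic on the rounded figures quoted in this article; the helper name `implied_base` is illustrative, and the outputs are approximations rather than reported numbers.

```python
def implied_base(current: float, growth_pct: float) -> float:
    """Back out the prior-period value implied by a percentage growth rate."""
    return current / (1 + growth_pct / 100)

# Rounded figures quoted in the article, in billions of dollars.
revenue = 23.9
free_cash_flow = 6.9

prior_year_revenue = implied_base(revenue, 196)     # year-over-year base
prior_quarter_revenue = implied_base(revenue, 75)   # sequential base
fcf_margin_pct = free_cash_flow / revenue * 100     # free cash flow as % of revenue

print(f"Implied year-ago quarter revenue: ${prior_year_revenue:.2f}B")
print(f"Implied prior quarter revenue:    ${prior_quarter_revenue:.2f}B")
print(f"Free cash flow margin:            {fcf_margin_pct:.1f}%")
```

On these inputs, the year-ago quarter works out to roughly $8 billion in revenue and free cash flow to a little under 30% of revenue.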

Management expects the momentum to accelerate. For Q3, Micron is guiding to revenue of $32.75 billion to $34.25 billion and diluted EPS of $18.75 to $19.55. That implies the company could nearly match its entire fiscal 2025 revenue base in a single quarter if it executes. Wall Street analysts have been racing to catch up: Needham recently raised its price target to $500 from $450 with a “Buy” rating, while the broader analyst consensus sits higher, at roughly $515–$530, implying material upside from current levels even after the recent pullback.
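To put the guidance in perspective, the sketch below computes the midpoint of each guided range and the sequential growth it would imply over the Q2 figures cited earlier. It uses only numbers from this article; the function names are illustrative.

```python
def midpoint(low: float, high: float) -> float:
    """Midpoint of a guidance range."""
    return (low + high) / 2

def pct_change(new: float, old: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100

# Q2 actuals quoted in the article (revenue in $B, adjusted EPS in $).
q2_revenue, q2_eps = 23.9, 12.20

# Q3 guidance ranges quoted in the article.
rev_mid = midpoint(32.75, 34.25)
eps_mid = midpoint(18.75, 19.55)

rev_growth = pct_change(rev_mid, q2_revenue)
eps_growth = pct_change(eps_mid, q2_eps)
target_raise = pct_change(500, 450)  # Needham price target change

print(f"Revenue midpoint ${rev_mid:.2f}B, implied sequential growth {rev_growth:.1f}%")
print(f"EPS midpoint ${eps_mid:.2f}, implied sequential growth {eps_growth:.1f}%")
print(f"Needham target raise: {target_raise:.1f}%")
```

At the midpoints, guidance implies sequential growth of roughly 40% in revenue and about 57% in EPS, so earnings are expected to keep growing faster than sales.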

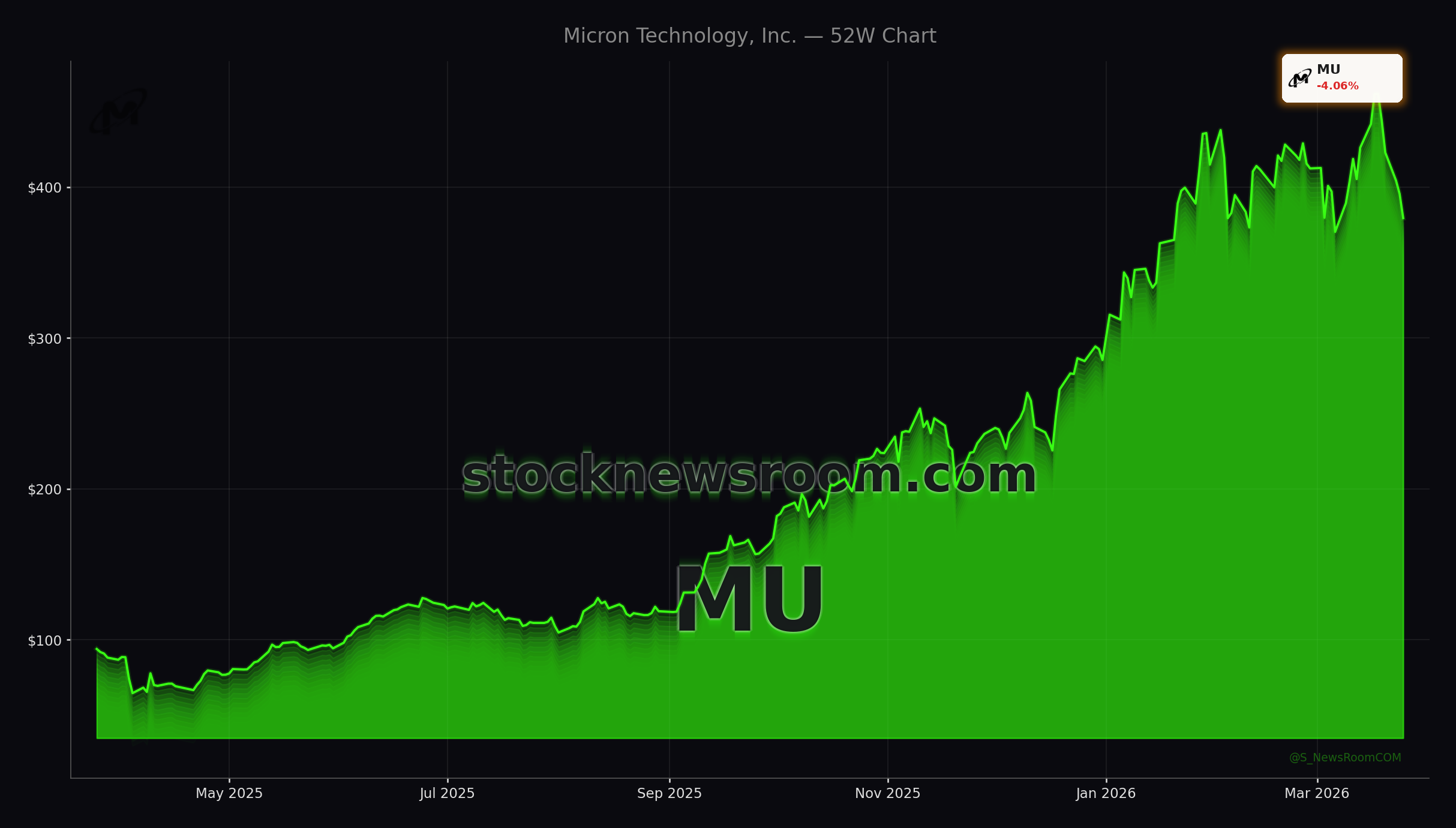

Despite these stellar results, the stock has pulled back sharply from almost $460 as investors weigh how high the bar has become and whether today’s AI supercycle represents a structural shift in memory or another powerful but ultimately cyclical upswing.

What is driving AI memory demand for Micron?

The core of the bull case is that memory has shifted from a cheap commodity to a strategic asset in AI infrastructure. Training and running large models inside hyperscale data centers now require dramatically higher memory capacity and bandwidth than legacy workloads. Micron expects AI data centers to account for more than half of total DRAM and NAND demand as soon as 2026, and says memory requirements in advanced AI systems have already doubled within a year.

On the product side, Micron is ramping HBM shipments for next‑generation accelerators, including volume shipments for Nvidia’s upcoming Vera Rubin systems, positioning itself alongside Nvidia and AMD at the heart of the AI stack. HBM supply for 2026 is effectively sold out under multi‑year strategic customer agreements, and the company is already developing HBM4E for further volume increases into 2027 and beyond. Tight cleanroom capacity, slower node‑driven bit growth and HBM consuming a growing share of DRAM wafers mean industry supply remains constrained well past 2026.

That imbalance is showing up in pricing. DRAM prices rose by a mid‑60% range sequentially last quarter, while NAND prices climbed by a high‑70% range. Micron can satisfy only about half to two‑thirds of customer demand across key segments, including low‑power DRAM, NAND and data center SSDs used in AI inference. Those dynamics help explain why Micron’s earnings are scaling so quickly. They are also why investors drawn to the stock’s low forward P/E ratio should remember how cyclical memory has historically been.

Why did debt tenders and capex rattle investors?

Alongside its results, Micron announced cash tender offers for six tranches of senior notes totaling up to $5.4 billion, with maturities between 2031 and 2035. The move, detailed in an SEC Form 8‑K and highlighted in a new tender announcement, is designed to proactively manage the balance sheet as cash flows surge, but it has also raised questions about capital allocation priorities at this stage of the cycle.

At the same time, Micron’s capital expenditures jumped to about $5.39 billion in Q1 FY 2026 alone, up 68% year over year, and management has outlined roughly $25 billion in capex for the current fiscal year. That spending supports new fabs and advanced nodes to capture sustained AI demand, but it also pressures near‑term free cash flow and leaves less room for buybacks or dividends. The market is now stress‑testing what happens to Micron’s earnings if AI memory demand grows even slightly more slowly than management’s current base case assumes.
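A rough way to see the spending ramp is to ask how much capex is left for the rest of the fiscal year once Q1 is subtracted from the roughly $25 billion plan. The sketch below uses only the figures quoted above and assumes, purely for illustration, that the remainder is spread evenly over the three remaining quarters; management has not been quoted here on quarterly phasing.

```python
# Figures quoted in the article, in billions of dollars.
q1_capex = 5.39
q1_yoy_growth_pct = 68
full_year_plan = 25.0

# Year-ago Q1 capex implied by the 68% growth rate.
prior_year_q1_capex = q1_capex / (1 + q1_yoy_growth_pct / 100)

# ASSUMPTION: the rest of the plan is split evenly across Q2-Q4.
remaining_capex = full_year_plan - q1_capex
avg_remaining_quarter = remaining_capex / 3

q1_share_pct = q1_capex / full_year_plan * 100

print(f"Implied year-ago Q1 capex:      ${prior_year_q1_capex:.2f}B")
print(f"Remaining FY capex:             ${remaining_capex:.2f}B")
print(f"Average per remaining quarter:  ${avg_remaining_quarter:.2f}B")
print(f"Q1 share of full-year plan:     {q1_share_pct:.1f}%")
```

Under that even-split assumption, quarterly capex would average about $6.5 billion for the rest of the year, above the Q1 level.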

Competitively, the landscape is also evolving. SK Hynix is preparing a U.S. listing that will give American investors another pure‑play HBM and DRAM name, while Samsung, Arm and even device makers like Apple are investing in custom silicon. For Micron, that means it must continue executing on both technology and capital discipline to justify its premium role in AI portfolios.

Does Google’s TurboQuant cap the upside for Micron?

The biggest structural question emerging around Micron’s results this week is Google Research’s newly published TurboQuant algorithm. TurboQuant compresses the KV cache used during large language model inference by at least 6x without degrading accuracy, and early benchmarks show up to an 8x performance boost versus 32‑bit implementations on Nvidia H100 GPUs. In theory, that lets AI operators serve more queries with the same HBM and DRAM footprint, challenging the assumption that memory capacity must rise linearly with model size and traffic.
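To make the memory argument concrete, the sketch below sizes a KV cache with the standard transformer formula (one key and one value tensor per layer) and shows how a 6x compression ratio changes the number of sequences that fit in a fixed memory budget. It does not implement TurboQuant. The model shape, the 16‑bit baseline, the 32,768‑token context and the 40 GiB budget are all hypothetical values chosen for illustration, and the article does not specify the baseline against which the 6x figure is measured.

```python
GIB = 1024 ** 3

def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_elem: int = 2) -> int:
    """KV cache size for one sequence: keys + values for every layer and token."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem

# HYPOTHETICAL model configuration (not taken from the article).
baseline = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=32_768)

compression_ratio = 6   # "at least 6x" per the article
budget = 40 * GIB       # HYPOTHETICAL memory reserved for KV cache

# Integer arithmetic avoids floating-point rounding in the floor division.
seqs_baseline = budget // baseline
seqs_compressed = budget * compression_ratio // baseline

print(f"KV cache per sequence (16-bit): {baseline / GIB:.2f} GiB")
print(f"Sequences in budget, uncompressed: {seqs_baseline}")
print(f"Sequences in budget, 6x compressed: {seqs_compressed}")
```

Under these assumptions, the same memory budget serves six times as many concurrent long-context sequences, which is the mechanism behind the concern that compression could slow memory unit growth.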

For memory suppliers, including Micron, SK Hynix and Samsung, TurboQuant is a genuine curveball. If broadly adopted, it could slow unit growth for some workloads, especially where cost and energy efficiency trump raw scale. However, history suggests that efficiency gains in semiconductors usually get “soaked up” by more demanding use cases. As models grow, context windows expand and AI moves from data centers into PCs, smartphones, cars and even autonomous systems from companies like Tesla, aggregate memory demand may still rise even as developers become more efficient per inference.

For now, most Wall Street firms, including Needham and others in the bullish camp, continue to see a multi‑year AI memory supercycle, but they are watching closely whether TurboQuant and similar techniques shift the slope of that curve.

In summary, Micron’s earnings underline how critical AI memory has become, even as debt moves, heavy capex and TurboQuant inject new complexity into the story. For U.S. investors, the stock’s pullback offers a chance to reassess position size and time horizon in what remains a high‑beta way to play the AI infrastructure build‑out. The next few quarters will be crucial in proving that this is a structural shift in memory economics, not just another cycle, and Micron is still one of the most important names to watch on that front.