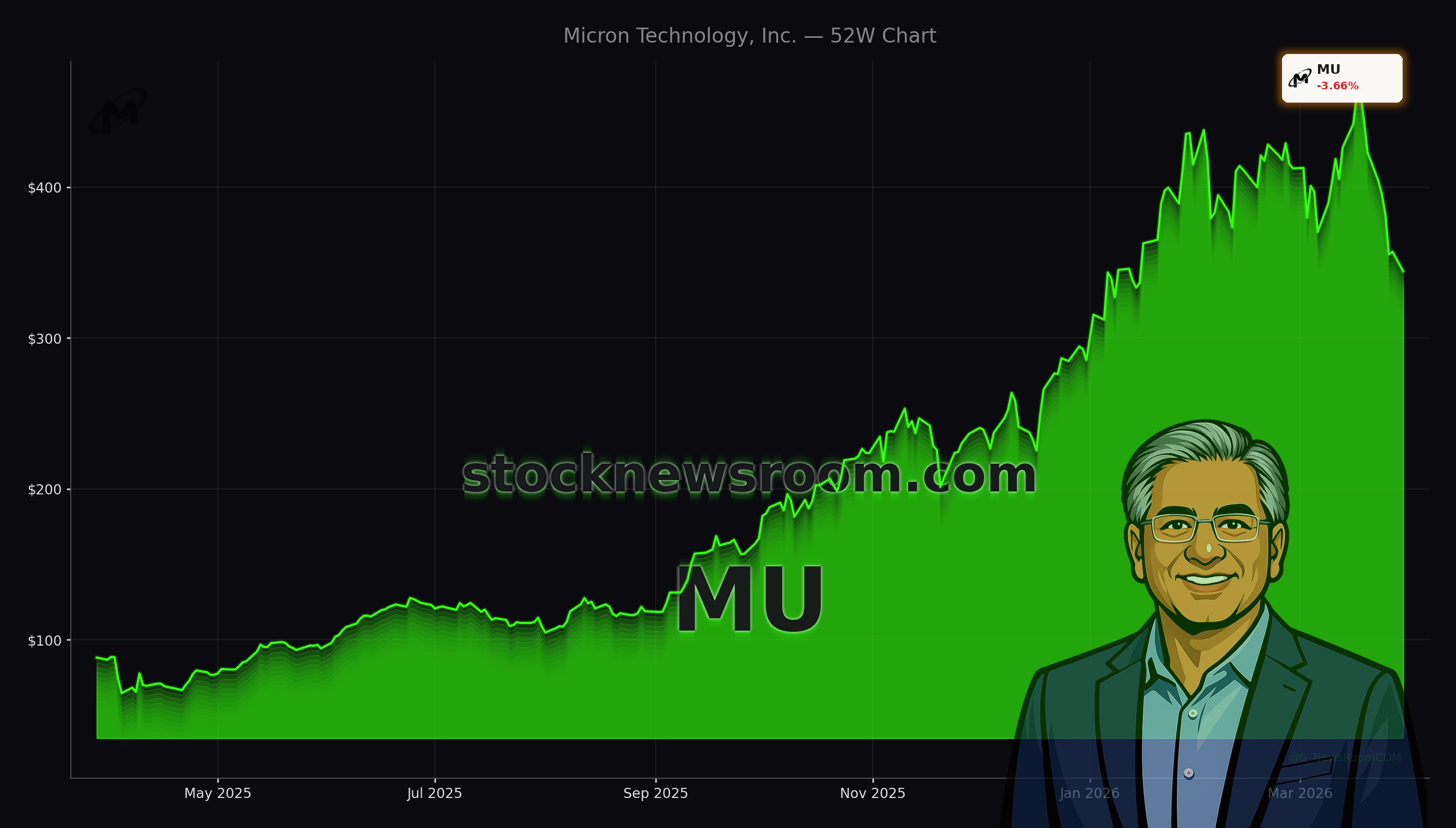

Is the Micron Technology AI Memory Boom already cracking under TurboQuant’s shock, or is this sell-off a rare entry point?

How hard did the TurboQuant shock hit Micron?

Micron Technology (MU) has lost more than 15% over the past week and is down about 5.2% today at $338.72 after a brutal sentiment swing triggered by Google’s new TurboQuant algorithm. TurboQuant is designed to compress the data AI models hold in memory so that workloads need substantially less memory without sacrificing performance. The announcement set off a sector‑wide rout in memory and storage names, with Micron, Western Digital and other producers selling off as investors re‑priced the Micron Technology AI Memory Boom narrative.
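To see why compression spooks memory investors, it helps to look at the raw arithmetic of storing numbers at lower precision. The sketch below is generic bit-width math under assumed numbers (the 8-billion-value workload and the bit widths shown are illustrative only); it is not a description of how TurboQuant itself works.

```python
# Generic illustration of why lower-precision storage shrinks memory needs.
# NOTE: this is plain bit-width arithmetic, not TurboQuant's actual method;
# the workload size below is a hypothetical example.

def footprint_gib(num_values: int, bits_per_value: float) -> float:
    """Memory needed to hold num_values numbers at a given precision, in GiB."""
    total_bytes = num_values * bits_per_value / 8  # 8 bits per byte
    return total_bytes / 2**30  # bytes -> GiB


values = 8_000_000_000  # hypothetical: 8 billion cached values
baseline = footprint_gib(values, 16)  # 16-bit storage as the reference point
for bits in (16, 8, 4, 3):
    size = footprint_gib(values, bits)
    print(f"{bits:>2}-bit: {size:6.2f} GiB (reduction vs 16-bit: {baseline / size:.2f}x)")
```

Dropping from 16 to 4 bits cuts the footprint fourfold. That per-workload saving is exactly what the market fears could translate into fewer gigabytes shipped.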

The fear is straightforward: if hyperscale AI workloads need fewer gigabytes of DRAM and high‑bandwidth memory (HBM) per model, the demand cycle could peak sooner, and pricing power could fade faster, than bulls expect. That concern hit Micron especially hard because it has become one of the purest public plays on AI infrastructure, with HBM and data‑center DRAM at the heart of the story.

Yet the selloff has also sharply widened the gap between Micron’s stock price and Wall Street’s expectations. According to recent data, Micron trades roughly 36% below the Street’s consensus target of about $527.60, which puts that target about 56% above the current price, even as order books for premium AI memory stretch into 2027 and calendar‑year 2026 HBM supply is already sold out.
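The gap between a price and a target can be measured against either number, and the two percentages differ noticeably. Here is a minimal sketch using the $338.72 price and $527.60 consensus target cited above:

```python
# Two ways to quote the same gap between a share price and a price target.

def discount_to_target(price: float, target: float) -> float:
    """How far the price sits below the target, as a % of the target."""
    return (target - price) / target * 100


def upside_to_target(price: float, target: float) -> float:
    """Gain needed for the price to reach the target, as a % of the price."""
    return (target - price) / price * 100


price = 338.72      # intraday price cited in the article
consensus = 527.60  # consensus target cited in the article
print(f"Discount to target: {discount_to_target(price, consensus):.1f}%")
print(f"Upside to target:   {upside_to_target(price, consensus):.1f}%")
```

The first function measures the gap against the target and the second against the current price. Mixing them up is a common source of inconsistent "upside" figures. The same upside formula reproduces the 56% figure quoted later for a $550 target against a $352 starting price.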

What is driving Micron’s AI memory supercycle?

The core of the Micron Technology AI Memory Boom is HBM – stacked DRAM packaged next to AI accelerators from partners like NVIDIA. Micron is one of only three meaningful HBM suppliers globally and the only major DRAM maker headquartered in the United States, a status that matters for both national security policy and long‑term supply agreements with U.S. cloud and defense customers.

Micron has moved its latest HBM4 generation into mass production for NVIDIA’s Vera Rubin platform, positioning itself squarely in the highest‑value corner of the AI supply chain. Management has said the company’s entire 2026 HBM output, including industry‑leading HBM4, is already booked on both price and volume. Management also projects that the HBM market will grow at roughly a 40% compound annual rate through 2028, toward a $100 billion opportunity, and Micron is aiming to claim outsized share.
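Compounding at 40% a year adds up quickly. The sketch below works backward from the $100 billion endpoint. The three-year horizon (a 2025 base year) is an assumption, because the projection above does not state a starting year.

```python
# Compound-growth arithmetic for a market growing at a fixed annual rate.
# ASSUMPTION: a three-year horizon ending in 2028 (i.e. a 2025 base year).

def compound(start: float, rate: float, years: int) -> float:
    """Value after growing `start` at `rate` per year for `years` years."""
    return start * (1 + rate) ** years


def implied_start(end: float, rate: float, years: int) -> float:
    """Starting value that reaches `end` after `years` years at `rate`."""
    return end / (1 + rate) ** years


CAGR = 0.40          # ~40% compound annual growth
TARGET_2028 = 100.0  # ~$100 billion market, in billions of dollars
BASE_YEAR = 2025     # assumed base year (not stated in the article)

start = implied_start(TARGET_2028, CAGR, 2028 - BASE_YEAR)
for year in range(BASE_YEAR, 2029):
    print(year, f"${compound(start, CAGR, year - BASE_YEAR):.1f}B")
```

Under that assumption, the implied starting market is about $36 billion. The market would need to grow by a factor of 1.4³ ≈ 2.74, nearly tripling, in three years.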

Beyond HBM, AI servers also rely heavily on conventional DRAM and NAND flash. Micron’s Cloud Memory Business Unit recently reported gross margins around 66%, a level more commonly associated with top‑tier logic chip designers than with historically boom‑bust memory suppliers. Q1 FY2026 revenue reached about $13.64 billion, up 57% year over year, and management has guided for Q2 revenue near $18.7 billion, with further acceleration in Q3 as more AI clusters come online.
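Growth rates like these are easy to sanity-check. Here is a minimal sketch using the figures above. Both outputs are computed here and are not company-reported numbers: the 57% year-over-year increase implies a year-ago quarter of roughly $8.7 billion, and the $18.7 billion guide implies a sequential jump of about 37%.

```python
# Sanity-check reported growth rates (figures in billions of dollars).

def growth_pct(current: float, prior: float) -> float:
    """Percent change from `prior` to `current`."""
    return (current / prior - 1) * 100


def implied_prior(current: float, growth: float) -> float:
    """Back out the earlier figure from a reported percent growth rate."""
    return current / (1 + growth / 100)


q1_fy26 = 13.64       # reported Q1 FY2026 revenue
q2_fy26_guide = 18.7  # management's Q2 guide

print(f"Implied Q1 FY2025 revenue: ${implied_prior(q1_fy26, 57):.2f}B")
print(f"Q2 guide vs Q1, sequential: {growth_pct(q2_fy26_guide, q1_fy26):.1f}%")
```

Because the reported 57% is itself rounded, the backed-out prior-year figure is only approximate.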

That explosive earnings power is why many on Wall Street see today’s volatility as cyclical noise in a structural upcycle, rather than a thesis‑breaking shock to the Micron Technology AI Memory Boom.

Do algorithms like TurboQuant really kill HBM demand?

TurboQuant has been framed by some traders as a “DeepSeek moment” for memory — a technological step change that could permanently reduce capacity needs. But several analysts argue that this view misunderstands how AI infrastructure evolves. History suggests that when compute and memory become more efficient, software developers often respond by training larger models, running more experiments and deploying AI into broader consumer and enterprise workflows.

In that read of the Micron Technology AI Memory Boom, compression is not the end of demand, but a catalyst that expands the overall addressable market. Alphabet’s breakthroughs may let a single AI workload use fewer HBM stacks, but they can also make AI cheaper to deploy at scale, driving more total servers, more inference nodes and ultimately more DRAM and NAND shipped.
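The bull argument boils down to one multiplication: total memory demand equals the number of workloads times the memory each one needs. This toy sketch uses made-up numbers (1,000 workloads, 80 GB each, and an assumed 4x compression gain). It shows that the outcome depends on whether deployment growth outruns the efficiency gain.

```python
# Toy model: total memory demand = workloads x memory per workload.
# All numbers are hypothetical and chosen only to illustrate the trade-off.

def total_demand_gb(workloads: float, gb_per_workload: float) -> float:
    return workloads * gb_per_workload


BASE_WORKLOADS = 1_000
BASE_GB = 80.0     # memory per workload before compression
COMPRESSION = 4.0  # assumed: compression cuts per-workload memory 4x

baseline = total_demand_gb(BASE_WORKLOADS, BASE_GB)
for growth in (2, 4, 6):  # how many times more workloads get deployed
    after = total_demand_gb(BASE_WORKLOADS * growth, BASE_GB / COMPRESSION)
    print(f"{growth}x workloads -> {after / baseline:.2f}x baseline demand")
```

Demand falls if deployments merely double, breaks even at 4x, and rises beyond that. Total demand grows only when workload growth exceeds the compression factor, which is the crux of the disagreement between bulls and bears.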

Micron’s own fundamentals back this more optimistic interpretation. The company nearly tripled revenue last quarter versus the prior year, set records in revenue, gross margin, earnings per share and free cash flow, and expects to set more records again in the coming quarter. Despite this, Micron trades at around 16 times adjusted earnings and roughly 7 times forward earnings, multiples that look modest for a business growing earnings at a double‑digit rate through at least 2029.

What are Wall Street analysts saying now?

Even after the recent selloff, there has been no wholesale capitulation among major banks. Cantor Fitzgerald analyst C.J. Muse has one of the most aggressive views, maintaining a price target of $700 for Micron, which would imply nearly 99% upside from recent levels near $352 and even more from today’s $338 print. Muse’s thesis rests on sustained AI infrastructure spending, Micron’s U.S. manufacturing advantage and a premium HBM mix lifting margins well above prior cycles.

J.P. Morgan has reiterated a $550 target and a Buy rating, arguing that TurboQuant does not materially alter Micron’s medium‑term demand trajectory. The bank highlights that Micron’s forward P/E of about 7x, combined with a PEG ratio near 0.4, implies the market is pricing in a steep earnings collapse that is inconsistent with customer order patterns and the company’s own guidance.
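The PEG ratio divides a P/E multiple by the expected annual earnings growth rate, expressed in percent. Working backward from the two figures cited implies expected growth of about 17.5% a year for a 7x P/E with a 0.4 PEG. This assumes both figures use the same forward earnings basis, which the bank's note may define differently.

```python
# PEG ratio = (price/earnings) / (expected annual EPS growth, in percent).

def peg_ratio(pe: float, eps_growth_pct: float) -> float:
    """PEG for a given P/E and percent EPS growth rate."""
    return pe / eps_growth_pct


def implied_growth_pct(pe: float, peg: float) -> float:
    """EPS growth rate (in percent) implied by a P/E and a PEG."""
    return pe / peg


forward_pe = 7.0  # forward P/E cited in the article
peg = 0.4         # PEG cited in the article
print(f"Implied EPS growth: {implied_growth_pct(forward_pe, peg):.1f}% per year")
print(f"PEG if growth were 10%: {peg_ratio(forward_pe, 10):.2f}")
```

A PEG below 1 is conventionally read as cheap relative to growth. The lower it goes, the more the price is discounting a slowdown that current guidance does not show.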

Across the Street, 38 of 43 analysts currently rate Micron Buy or Strong Buy, with just three Holds and two Sells. The median target price of roughly $550 suggests around 56% upside from the pre‑selloff level of $352, and an even wider gap from today’s intraday quote. Some institutional investors have taken profits — for example, Net Worth Advisory Group cut its Micron stake by over 70% in Q4 — but overall institutional ownership remains above 80%, signaling that most long‑term holders are staying put.

How does Micron stack up against Samsung and SK Hynix?

From a U.S. investor’s perspective, a key attraction of Micron in the Micron Technology AI Memory Boom is that it offers direct, liquid exposure to AI memory without the political and governance risks of owning its South Korean peers. Samsung and SK Hynix are formidable competitors with scale advantages and deep pockets, but they are less accessible to many U.S. retail investors and more exposed to regional geopolitical tensions.

Micron, meanwhile, has secured a central role in U.S. semiconductor policy and CHIPS Act support as the only domestic DRAM producer of scale. That can translate into tax incentives, grants and long‑dated supply commitments from U.S. cloud players and the defense sector. The company is also emerging as a key supplier of memory chips for next‑generation devices like AI PCs, AI‑optimized smartphones, smart glasses and even humanoid robots, where compute and memory footprints are expected to surge.

Compared to diversified mega‑caps like Apple or Tesla, Micron gives more concentrated exposure to the picks‑and‑shovels layer of the AI buildout, similar to how investors use NVIDIA for accelerators rather than consumer end‑markets. That concentration amplifies volatility, but also gives Micron more direct leverage to any upside surprise in AI capital expenditure.

Is valuation signaling a trap or a rare entry?

With Micron’s stock up close to 292% over the past 12 months but now roughly 28% below its 52‑week high of about $471, investors are split on whether the risk/reward still favors new money. On the bear side, memory remains deeply cyclical. Micron plans to spend around $25 billion in capex in fiscal 2026, and a sudden air pocket in AI demand or a mis‑timed capacity build‑out could crush returns. Rapid algorithmic efficiency gains beyond TurboQuant could also flatten the slope of the Micron Technology AI Memory Boom faster than consensus expects, making today’s peak earnings harder to sustain.

On the bull side, every key operational metric is moving in the right direction: quarterly revenue has ramped from roughly $8 billion in Q2 FY2025 to nearly $24 billion a year later, gross margins are guided to 67% next quarter, and the 2026 HBM book is already closed. Technology leaders in the S&P 500 outside the mega‑cap “Magnificent Seven” rarely trade at 7x forward earnings while posting record profit growth, yet Micron, now among the largest non‑megacap tech positions in the index, does exactly that.

For diversified U.S. portfolios already owning broad NASDAQ or S&P 500 ETFs, a modest, targeted Micron position can function as a satellite bet on the depth and duration of AI data‑center spending. Investors more heavily exposed to cyclical semiconductors, including equipment makers and memory peers, may want to size positions conservatively given the combined volatility.

“We expect significant records again in the coming quarter as AI demand, tight supply and strong execution continue to drive Micron’s growth,” said Sanjay Mehrotra, CEO of Micron Technology.

The Micron Technology AI Memory Boom remains intact for now: demand for HBM4 and data‑center DRAM is overwhelming supply, margins are at record levels and long‑term contracts extend well into 2027. For U.S. investors, the key is whether Google’s TurboQuant and similar efficiency gains mark a cyclical scare or an early signal that the AI buildout will mature faster than expected. The next few quarters of orders, capex commentary and pricing trends will show which story wins out, but for now Micron continues to look like a high‑beta way to express a long‑term conviction in AI infrastructure spending.