Can NVIDIA AI Factories and a revived China pipeline really unlock the next leg of growth for a stock already priced for perfection?

How do NVIDIA AI Factories change the power equation?

At CERAWeek in Houston, NVIDIA and Emerald AI unveiled a partnership with AES, Constellation, Invenergy, NextEra Energy, Nscale Energy & Power and Vistra to develop a new generation of grid-aware AI data centers branded as NVIDIA AI Factories. These sites will use the Vera Rubin DSX AI Factory reference design plus the DSX Flex software layer, allowing operators to modulate power consumption in real time and even send electricity back to the grid during peak stress.

NVIDIA management argues that today’s U.S. power system is built around rare peak-demand hours, leaving large amounts of capacity underutilized most of the year. By turning AI factories into “flexible energy assets” rather than passive loads, NVIDIA claims the approach could unlock up to 100 gigawatts of latent capacity without waiting on years-long transmission upgrades. Emerald AI’s Conductor platform will orchestrate AI workloads, on-site generation and batteries to hit power targets while maintaining service levels for compute tenants.

For investors, the logic is straightforward: every megawatt that can be brought online faster is another rack of GPUs and Grace CPUs that can be sold sooner. With grid interconnection queues delaying many data center projects by years, NVIDIA AI Factories offer a path to recognize revenue sooner from the company’s Vera Rubin and Blackwell architectures.

What does the China H200 restart really mean for NVIDIA?

On the geopolitical side, CEO Jensen Huang confirmed that the company has restarted manufacturing of its H200 processors for sale into China after a multi-quarter hiatus tied to U.S. export controls. NVIDIA has already received purchase orders and is ramping supply, although Huang stopped short of quantifying expected revenue.

The H200 sits above the older, China-specific H20 but below NVIDIA’s latest Blackwell-class products, which remain restricted. Huang has previously sized China’s AI infrastructure opportunity at about $50 billion annually, growing 50% per year. KeyBanc analyst John Vinh estimates that, under permissive licensing, Chinese customers could buy roughly 1.5 million H200 units in 2026, equating to around $30 billion of potential revenue. Even if actual volumes land well below that, any normalized flow would be additive to Wall Street models that had largely written China out of their forecasts.
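As a rough sanity check, the KeyBanc figures imply a per-unit price, and Huang's 50% growth rate compounds quickly. The Python sketch below only divides and compounds the numbers quoted above. The implied average selling price is an inference from dividing $30 billion by 1.5 million units, not a price disclosed by NVIDIA or KeyBanc. The constant-growth projection is an illustrative assumption, not a forecast. The helper names are hypothetical.

```python
# Back-of-envelope arithmetic on the China figures quoted in the article.
# Assumption: the per-unit price is simply revenue / units (an inference,
# not a disclosed price), and growth stays constant each year (illustrative).

def implied_asp(revenue_usd: float, units: float) -> float:
    """Average selling price implied by a revenue estimate and a unit estimate."""
    return revenue_usd / units


def compound(base: float, growth: float, years: int) -> list[float]:
    """Project a base value forward at a constant annual growth rate (year 0 = base)."""
    return [base * (1 + growth) ** t for t in range(years + 1)]


if __name__ == "__main__":
    # KeyBanc: ~1.5M H200 units -> ~$30B of potential revenue.
    asp = implied_asp(30e9, 1.5e6)
    print(f"Implied H200 ASP: ${asp:,.0f}")  # → Implied H200 ASP: $20,000

    # Huang: ~$50B annual opportunity growing ~50% per year.
    for year, tam in enumerate(compound(50e9, 0.50, 3)):
        print(f"Year {year}: ${tam / 1e9:.1f}B")
```

Under those assumptions, a $50 billion opportunity growing at 50% a year would more than triple within three years. That is why even a partial China reopening matters to the long-range models.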

The key risk is competition from domestic champion Huawei, which just launched its Atlas 350 inference accelerator and is touting nearly triple the compute performance of NVIDIA’s H20 in some configurations. Benchmarks remain hard to compare, and Huawei has historically lagged badly on power efficiency. Even so, a credible homegrown alternative could cap NVIDIA’s share in a strategically important market.

Why is the stock stuck despite trillion-dollar AI headlines?

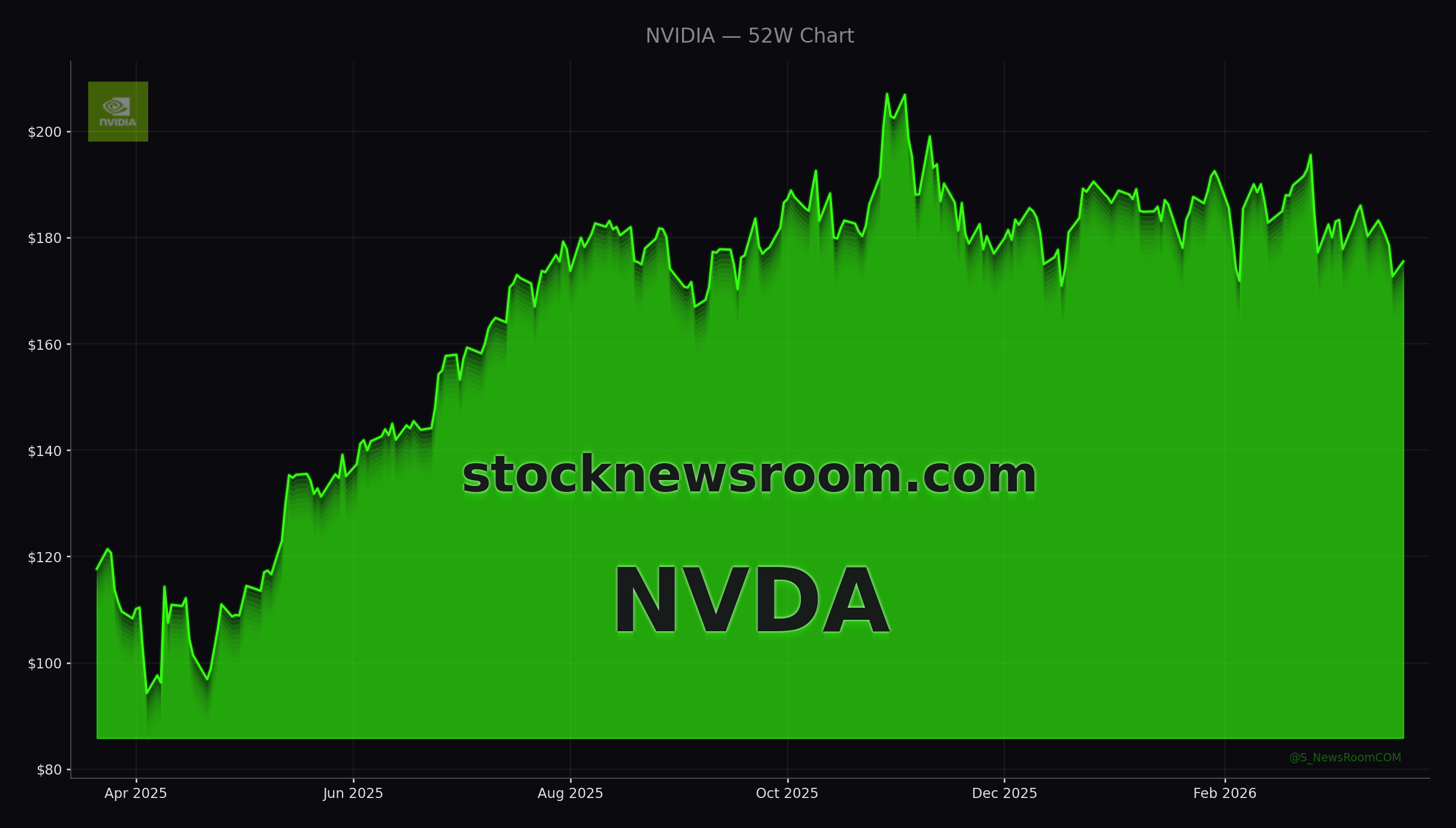

NVDA shares closed Monday at $175.38, up 1.42% on the day but still about 17% below their 52-week high of $212.19 and roughly flat over the past several months. The stock has even dipped below its 200-day moving average in recent sessions, undermining the high-momentum narrative that carried it through 2023–2024.
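The gap to the 52-week high can be stated two ways. One is how far the price has fallen from the peak. The other is how much it would have to rise to get back there. The Python sketch below computes both from the two prices quoted above. The function names are hypothetical, and the math ignores dividends and intraday moves.

```python
# Drawdown vs. required recovery, using the closing price and 52-week high
# quoted in the article. Hypothetical helper names; dividends ignored.

def pct_below_high(price: float, high: float) -> float:
    """Percentage by which the current price sits below a prior high."""
    return (high - price) / high * 100


def gain_to_recover(price: float, high: float) -> float:
    """Percentage gain needed from the current price to reach the prior high."""
    return (high / price - 1) * 100


if __name__ == "__main__":
    close, high_52w = 175.38, 212.19
    print(f"{pct_below_high(close, high_52w):.1f}% below the high")   # → 17.3% below the high
    print(f"{gain_to_recover(close, high_52w):.1f}% gain to recover")  # → 21.0% gain to recover
```

The asymmetry is the point. A roughly 17% drawdown needs a roughly 21% rally just to revisit the prior peak.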

That muted price action contrasts with NVIDIA’s aggressive guidance. At its recent GTC event, Huang outlined more than $1 trillion in expected AI chip revenue from the Blackwell and Rubin platforms alone between 2025 and 2027. Deepwater Asset Management’s Gene Munster now models closer to 40% year-over-year growth for 2027 versus prior consensus near 30%, arguing that “expectations for CY27 are still too low.” Yet NVDA fell around 3% in the three days following GTC, suggesting investors are increasingly focused on what happens after 2027.

Sell-side sentiment remains overwhelmingly positive. Wolfe Research reiterated an “Outperform” rating and a $275 price target after GTC, citing 14%–17% upside to consensus data center forecasts through FY28. Bernstein also maintains an “Outperform” stance, highlighting NVIDIA alongside Broadcom as core beneficiaries of the ongoing AI capex wave. Zacks currently flags NVDA in the buy range, with 10 upward EPS revisions for the April 2026 quarter over the past month.
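Wolfe's 14%–17% figure refers to consensus data center forecasts, not to the share price. The implied price upside is a separate calculation. The short Python sketch below compares the $275 target with Monday's $175.38 close. The assumption is loud here: the target was reiterated after GTC, when the share price may have differed. The helper name is hypothetical.

```python
# Implied upside from an analyst price target relative to a given share price.
# Assumption: we compare against Monday's close, which may differ from the
# price at the time Wolfe Research reiterated its target.

def implied_upside(target: float, price: float) -> float:
    """Percentage move from `price` to `target`."""
    return (target / price - 1) * 100


if __name__ == "__main__":
    print(f"{implied_upside(275.0, 175.38):.1f}%")  # → 56.8%
```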

How do NVIDIA AI Factories fit into the broader AI ecosystem?

Wall Street is increasingly treating NVIDIA as the bellwether for the entire AI trade. The company already commands about 95% of the data center GPU market and sits at the center of the “Magnificent Seven” mega-cap tech cohort, which accounts for roughly a third of the S&P 500’s market value. Big Tech platforms like Apple, Microsoft, Amazon and Meta are expected to pour roughly $700 billion into AI data center hardware this year, much of it anchored around NVIDIA’s chips and CUDA software stack.

NVIDIA AI Factories address the next constraint in that buildout: power and time-to-grid. The design pairs compute campuses with co-located generation and storage from the outset, then transitions them into full grid participants. The aim is to compress deployment timelines that currently threaten to slow GPU shipments. That is critical as the AI cycle shifts from training large models to running “agentic” and physical AI at scale, from humanoid robots to autonomous systems. Analysts like Munster see additional multi-decade growth potential in these areas.

On the risk side, rivals are not standing still. Advanced Micro Devices is pushing hard into data center GPUs, while companies like Tesla are investing in in-house AI silicon and vertically integrated energy-campus concepts that echo parts of NVIDIA’s vision. If hyperscalers or major customers succeed in replacing significant portions of their NVIDIA stack with custom chips, the growth curve could flatten faster than bulls expect.

“AI factories are the engines of the intelligence era, and like any great engine, every system must be designed together — energy, compute, networking and cooling as one architecture.”— Jensen Huang, NVIDIA CEO

In sum, NVIDIA AI Factories and the cautious H200 return to China strengthen the company’s long-term AI infrastructure story even as the share price churns. For U.S. investors, the combination of power partnerships, renewed China optionality and still-bullish analyst targets keeps NVDA at the core of any high-conviction AI portfolio. The next few quarters of orders and execution will show whether these initiatives translate into the sustained growth that the market is increasingly demanding.