Is NVIDIA AI Infrastructure still a buy now that the stock looks cheap again despite an unprecedented AI data-center boom?

Is NVIDIA really cheap after the AI surge?

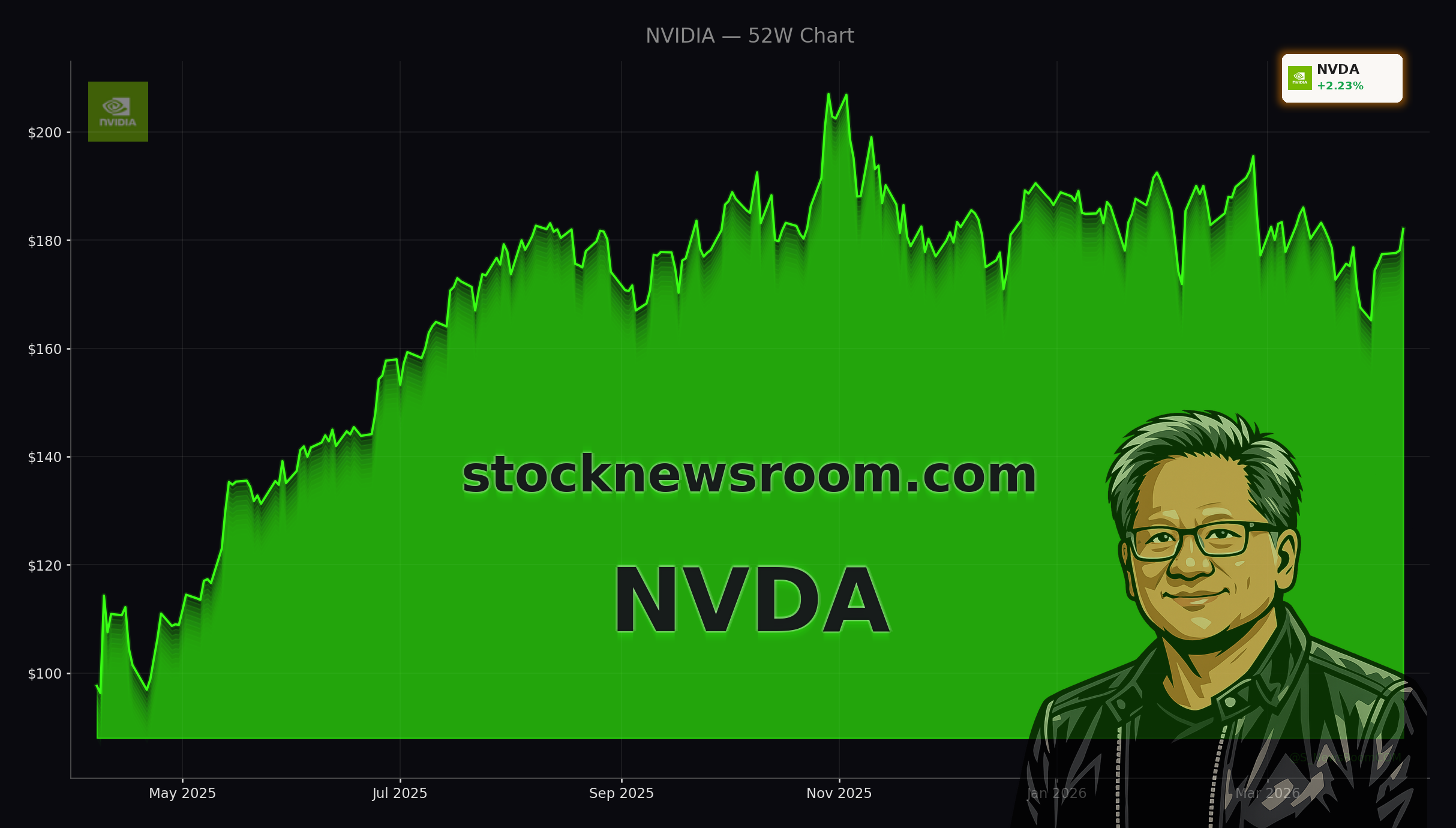

Shares of NVIDIA Corporation finished regular trading at $182.08 on Wednesday, up about 2.2% on the day but slightly softer in after-hours action around $181.05. The move leaves the stock roughly 14% below its recent peak, even though analysts still expect earnings to compound close to 40% annually over the next three to five years. On a forward price-to-earnings multiple near 21–22, NVIDIA now trades at a discount to the S&P 500 despite being the clear leader in AI accelerators and system-level platforms.

For context, Wall Street had routinely been willing to pay about 40x forward earnings for NVDA over the past year as AI enthusiasm peaked. Multiple compression, together with continued earnings expansion, now leaves the stock at its lowest forward valuation in roughly a year, according to consensus estimates compiled by major banks. That is why some portfolio managers describe the current phase as a valuation correction, not a fundamental one.
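The valuation arithmetic behind these figures can be sketched in a few lines. This is a rough back-of-the-envelope illustration, not an analyst model: it assumes the midpoint of the quoted 21–22 forward multiple (21.5), treats the "roughly 14%" drawdown and "close to 40%" growth as exact, and uses helper names that are purely illustrative.

```python
def implied_peak(price: float, drawdown: float) -> float:
    """Price level from which `price` represents the given fractional drawdown."""
    return price / (1.0 - drawdown)


def implied_forward_eps(price: float, forward_pe: float) -> float:
    """Forward earnings per share implied by a price and a forward P/E."""
    return price / forward_pe


def peg_ratio(forward_pe: float, growth_pct: float) -> float:
    """Forward P/E divided by expected annual earnings growth (in percent)."""
    return forward_pe / growth_pct


# Figures quoted in the article; the 21.5 midpoint is an assumption.
close_price = 182.08
forward_pe = 21.5
expected_growth_pct = 40.0

peak = implied_peak(close_price, 0.14)
forward_eps = implied_forward_eps(close_price, forward_pe)
peg = peg_ratio(forward_pe, expected_growth_pct)

# Hypothetical price if the market again paid 40x the same forward earnings.
price_at_40x = forward_eps * 40.0

print(f"Implied recent peak:   ${peak:.2f}")
print(f"Implied forward EPS:   ${forward_eps:.2f}")
print(f"PEG ratio:             {peg:.2f}")
print(f"Price at 40x multiple: ${price_at_40x:.2f}")
```

A PEG ratio well below 1 is what the "valuation correction, not a fundamental one" framing rests on, although the result is only as reliable as the consensus growth estimate fed into it.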

At the same time, traders who had bet against the name are backing off. A recent technical short setup that had been showing a gain of more than 15% has fizzled: the position is now around breakeven and technical signals have turned neutral. For active investors, capital tied up in a fading short trade could be better deployed elsewhere in the semiconductor complex while the core NVIDIA AI Infrastructure thesis remains intact.

How dominant is NVIDIA AI Infrastructure today?

The broader AI sector is in the middle of a super-cycle. Hyperscalers are on track to invest more than $200 billion in AI data centers and related compute capacity, and NVIDIA Corporation is currently capturing an estimated 80% market share in AI accelerators. Bank of America, in a recent semiconductor outlook, lifted its 2026 chip revenue forecast to $1.3 trillion and highlighted NVIDIA, Broadcom, Marvell, and AMD as key drivers of AI compute growth. BofA also projects the overall chip market could reach $2 trillion by 2030, implying a roughly 20% CAGR over the rest of the decade.
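Growth rates quoted alongside endpoint forecasts can be checked with the standard compound annual growth rate formula. The sketch below assumes the $1.3 trillion figure is the 2026 base and the $2 trillion figure is the 2030 endpoint (four compounding years). The article does not state which base year or market scope sits behind the quoted 20%, so a rate computed this way need not match it.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def compound(start_value: float, rate: float, years: int) -> float:
    """Value reached by growing `start_value` at `rate` for `years` periods."""
    return start_value * (1.0 + rate) ** years


# Assumed endpoints, in trillions of dollars (base-year choice is an assumption).
revenue_2026 = 1.3
revenue_2030 = 2.0
years = 2030 - 2026

implied_rate = cagr(revenue_2026, revenue_2030, years)

# For comparison: where a 20% annual rate from the same base would land.
value_at_20_pct = compound(revenue_2026, 0.20, years)

print(f"Implied CAGR 2026-2030: {implied_rate:.1%}")
print(f"2030 value at 20% CAGR: ${value_at_20_pct:.2f}T")
```

Running the numbers both ways makes the sensitivity to the chosen baseline explicit, which matters when growth rates from different research notes are compared.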

Within that landscape, NVIDIA AI Infrastructure has evolved well beyond stand-alone GPUs. The company now sells full systems: Blackwell-based servers, high-speed networking, CUDA software and, increasingly, end-to-end data-center blueprints that hyperscalers can replicate at massive scale. CEO Jensen Huang has described this as nothing less than an industrial revolution, with AI factories replacing traditional data centers. Analysts point to long-dated supply agreements for high-bandwidth memory (HBM) and next-gen platforms like Vera Rubin as evidence that the spending wave is still in its early innings.

Even outside Earth’s atmosphere, NVIDIA AI Infrastructure is starting to matter. Partners such as Planet Labs and Firefly Aerospace are deploying Jetson edge modules to process imagery directly in orbit, sending only actionable insights back to the ground. While today that revenue is negligible versus NVIDIA’s core data-center business, it illustrates how the company’s hardware and CUDA software stack are becoming the default platform wherever AI inference is needed.

What about competition from AMD, TSMC and others?

Competitors are not standing still. Advanced Micro Devices is ramping its own AI accelerators and has been highlighted alongside NVIDIA in Bank of America’s AI compute basket. Taiwan Semiconductor Manufacturing remains a critical foundry for both companies, while memory specialist Micron has already begun volume shipments of HBM4 targeted at NVIDIA’s Vera Rubin architecture. This broader ecosystem underscores how central NVIDIA AI Infrastructure has become: when NVIDIA moves to a new platform, suppliers from DRAM to packaging often see multi-year demand visibility.

In equity markets, however, some peers have dramatically outperformed over the past year. Micron, for example, has surged as HBM demand exploded, while NVDA has traded mostly sideways for nine months, consolidating after its earlier AI rally. Risk-on sentiment following geopolitical de-escalation, including an Iran ceasefire, has recently lifted leading chip names across the board, with NVIDIA, AMD, Broadcom and Micron all catching a bid. Even so, the gap between NVIDIA's valuation and those of some faster-moving memory names remains notable.

Still, many Wall Street strategists argue that NVIDIA’s moat is wider. The CUDA ecosystem, years of software optimization, and a deep installed base inside hyperscale clouds create switching costs that newer entrants struggle to overcome. While Google, for instance, pushes its custom Tensor Processing Units, it still offers NVIDIA GPUs side by side in Google Cloud. For AI agents, vertical models, and emerging workloads, those GPUs remain a default choice.

How are analysts positioning NVIDIA now?

Despite the recent consolidation in the share price, major research houses continue to frame NVIDIA as a core AI holding. Bank of America’s Vivek Arya has repeatedly named NVIDIA among the primary beneficiaries of the AI compute boom. Other Wall Street firms, including Morgan Stanley and Goldman Sachs, have pointed to the stock’s compressed P/E ratio relative to its growth outlook as an attractive setup, even if some recommend selectively rotating into under-owned AI beneficiaries for tactical outperformance.

At the same time, some growth-focused strategists have reduced exposure, arguing that sentiment around mega-cap tech is fragile and that other AI names may outperform in the near term. Yet even many of those more cautious voices concede that the fundamental story of NVIDIA AI Infrastructure, from the $1 trillion order book for Blackwell and Rubin to sovereign AI projects in Europe and the Middle East, has not materially changed.

In summary, NVIDIA AI Infrastructure remains the backbone of the global AI buildout, yet the stock now trades at its lowest forward valuation in roughly a year. For U.S. and international investors, that combination of a discounted multiple, dominant market share and a multi-year, trillion-dollar order pipeline could be compelling. The next earnings season and any updates on Blackwell and Vera Rubin deployments will be critical in determining whether NVIDIA finally breaks out of its sideways range and reclaims leadership among the market’s AI heavyweights, alongside peers like Apple and Tesla.