Can the NVIDIA AI Strategy, built on $18 billion of strategic bets, preserve the company’s data center dominance as hyperscalers go custom?

How is NVIDIA reshaping its AI roadmap?

The core of the current NVIDIA AI Strategy is to move from a pure GPU vendor to an end‑to‑end AI infrastructure platform. Data center revenue recently surged to about $62 billion annually, and GPUs still command roughly 86% of the AI data center processor market. But hyperscalers like Apple, Alphabet, Amazon and Meta are building custom chips for AI inference and training, reducing their dependence on off‑the‑shelf NVIDIA hardware.

Instead of fighting that shift head‑on, NVIDIA is buying its way into every critical layer of the stack. Over just a few months, the company has committed at least $18 billion to public‑market strategic stakes, plus multibillion‑dollar private deals tied to long‑term chip and data center capacity. The strategy mirrors NVIDIA’s 2019 Mellanox acquisition, which quietly became a cornerstone of its data center dominance by bundling high‑speed networking tightly with its GPUs.

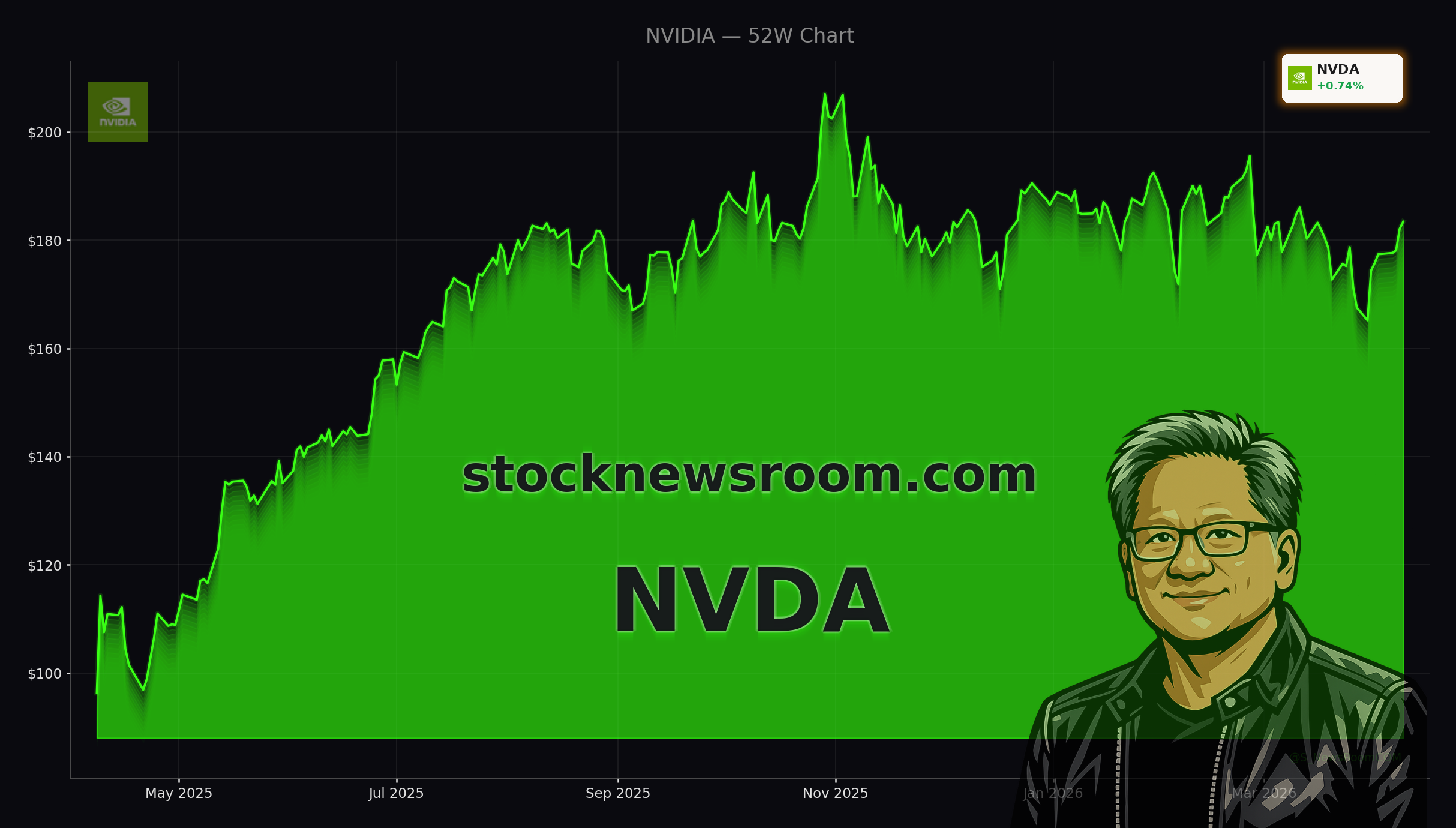

On the charts, the stock has been trapped for months in a $165–$195 range, but it is now on a seven‑day winning streak and flirting with the key $185 breakout level that many traders view as a gateway back toward $200. Yahoo Finance data show NVDA still sits about 12% below its 52‑week high, leaving room for upside if investors regain conviction in the AI story.

What do the Marvell and photonics bets signal?

One pillar of the NVIDIA AI Strategy is a $2 billion investment and deep partnership with Marvell Technology. Marvell’s semi‑custom chips and optical interconnects plug into NVIDIA’s NVLink Fusion platform, targeting the biggest bottlenecks in AI data centers: memory bandwidth, interconnect speed and power efficiency. Morgan Stanley expects custom accelerators overall to rise from roughly 12% of the accelerator market today to 24% by 2030, and NVIDIA is positioning itself to benefit rather than be displaced.

The Marvell deal is complemented by $2 billion investments each in Lumentum and Coherent, both photonics players that are scaling optical connectivity and silicon photonics for next‑generation AI fabrics. MarketBeat reports that Lumentum’s stock has rallied toward record highs as buy‑side firms like Jackson Square Capital ramp positions, citing NVIDIA’s backing as validation of its AI exposure. These photonics stakes give NVIDIA early access to enabling technologies for its Blackwell and Vera Rubin platforms, which are heavily dependent on high‑bandwidth memory and advanced optical links.

Analysts have taken notice. Barclays recently upgraded Marvell to “Overweight,” highlighting the NVIDIA tie‑up and a record custom AI design pipeline with more than 50 opportunities. For NVDA holders, this supports a thesis that future margin expansion will come less from standalone GPU pricing and more from capturing system‑level economics across networking, optics and custom silicon.

How does NVIDIA respond to custom chips from Amazon and Google?

Competition is intensifying. Amazon’s Trainium and Google’s TPU platforms are purpose‑built for AI inference and select training workloads, often at lower cost than general‑purpose GPUs. Amazon CEO Andy Jassy has openly said that some AWS customers want better “price‑performance” than NVIDIA GPUs can deliver, and noted that Trainium2 is sold out while Trainium3 is nearly fully subscribed. Google, meanwhile, recently expanded a five‑year custom AI chip deal with Broadcom, signaling that the hyperscalers are serious about building a “second lane” of non‑GPU accelerators.

The NVIDIA AI Strategy aims not to block these moves but to remain indispensable around them. NVIDIA has invested heavily in CoreWeave, whose data centers are packed with its GPUs and now underpin a $21 billion, multi‑year AI capacity agreement with Meta that includes some of the first deployments of Vera Rubin systems. NVIDIA is also set to invest up to $100 billion in OpenAI alongside a massive chip supply commitment, while exploring a return to paused mega‑projects like the Stargate UK data center once power and regulatory economics improve.

At the CPU layer, NVIDIA took a stake in RISC‑V startup SiFive to help address what its own head of AI infrastructure has called the next bottleneck: CPUs orchestrating agentic AI workloads. A separate $5 billion equity stake in Intel deepens cooperation around “balanced systems,” even as Intel promotes Xeon 6 as an AI‑capable CPU alternative.

Is NVIDIA stock still attractive for U.S. investors?

From a valuation angle, several major firms argue the market is underpricing the NVIDIA AI Strategy. Goldman Sachs has highlighted that the “Magnificent Seven” now trade around 20x forward earnings, a far cry from the dot‑com bubble, and that tech underperformance this year has created a buying window. The Globe and Mail likewise notes that NVDA’s forward P/E in the low‑20s looks compelling given 70%‑plus revenue growth and industry‑leading free cash flow.

UBS’s HOLT cash‑flow model is even more aggressive, suggesting that the stock price could theoretically be several times higher if NVIDIA can sustain its extraordinary cash‑flow returns on investment and asset growth. At the same time, activity in inverse products like the GraniteShares 2x Short NVDA ETF shows that a vocal cohort of traders is betting on volatility and potential downside, especially if the fragile U.S.–Iran cease‑fire breaks or AI spending growth cools.

Near term, technical strategists point to $170 as key support and $185–$200 as resistance; a decisive move above that band could re‑ignite momentum across the S&P 500 and Nasdaq 100, where NVIDIA remains one of the heaviest weights. With the stock only about 2% above Wednesday’s close of $182.05 and still 11.9% below its all‑time high, risk‑tolerant investors are weighing whether the latest investment blitz justifies adding exposure on potential breakout signals.
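For readers who want to check the arithmetic behind these levels, the short Python sketch below derives the price points implied by the figures quoted above. It is only an illustration: it assumes "about 2%" means exactly 2% and that the 11.9% gap is measured from the all‑time high down to the current price, and the function names are made up for this example rather than taken from any data provider.

```python
# Back-of-the-envelope check of the price levels quoted in the article.
# Assumptions (not stated precisely in the article):
#   - "about 2% higher" is treated as exactly 2%
#   - "11.9% below its all-time high" means price = high * (1 - 0.119)


def implied_high(price: float, pct_below: float) -> float:
    """Return the reference high from which `price` sits `pct_below` percent lower."""
    return price / (1 - pct_below / 100)


def pct_move_to(price: float, target: float) -> float:
    """Return the percentage move needed to get from `price` to `target`."""
    return (target / price - 1) * 100


wednesday_close = 182.05              # figure quoted in the article
current = wednesday_close * 1.02      # assumed current price, 2% above that close
all_time_high = implied_high(current, 11.9)

print(f"Assumed current price:   ${current:.2f}")
print(f"Implied all-time high:   ${all_time_high:.2f}")
print(f"Move needed to hit $200: {pct_move_to(current, 200.0):.1f}%")
print(f"Drop to $170 support:    {pct_move_to(current, 170.0):.1f}%")
```

Under these assumptions the current price lands just above the $185 breakout level, with the top of the $185–$200 resistance band still sitting below the implied all‑time high.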

Related Coverage

Investors who want a deeper dive into valuation can read how rising data center demand and cheaper multiples intersect in “NVIDIA AI Infrastructure +2.2%: Cheap Valuation or AI Hype?”, which analyzes whether the stock’s recent pullback has created a new entry point. For a sector comparison, “Oracle AI Strategy -3.9% Plunge: Boom or Bust Ahead?” looks at how a very different AI approach at Oracle stacks up against hardware‑driven models, giving context for how NVIDIA’s capital‑heavy roadmap compares with software‑centric peers.

“Compute infrastructure will be the basis for the economy of the future, and we will utilize what we’re building with Nvidia to both create new AI breakthroughs and empower people and businesses with them at scale.” (Sam Altman, OpenAI CEO)

In sum, the NVIDIA AI Strategy now revolves around owning every critical layer of AI infrastructure, from GPUs and custom silicon to photonics, CPUs and cloud capacity. For U.S. investors, that creates a high‑stakes trade‑off between geopolitical and spending risks on one side and durable AI leadership on the other, with the next breakout or breakdown in NVDA likely to ripple across the entire market.