Can the NVIDIA AI Strategy really sustain trillion‑dollar data center ambitions as rivals, regulation and power constraints close in?

Is NVIDIA still the AI market’s backbone?

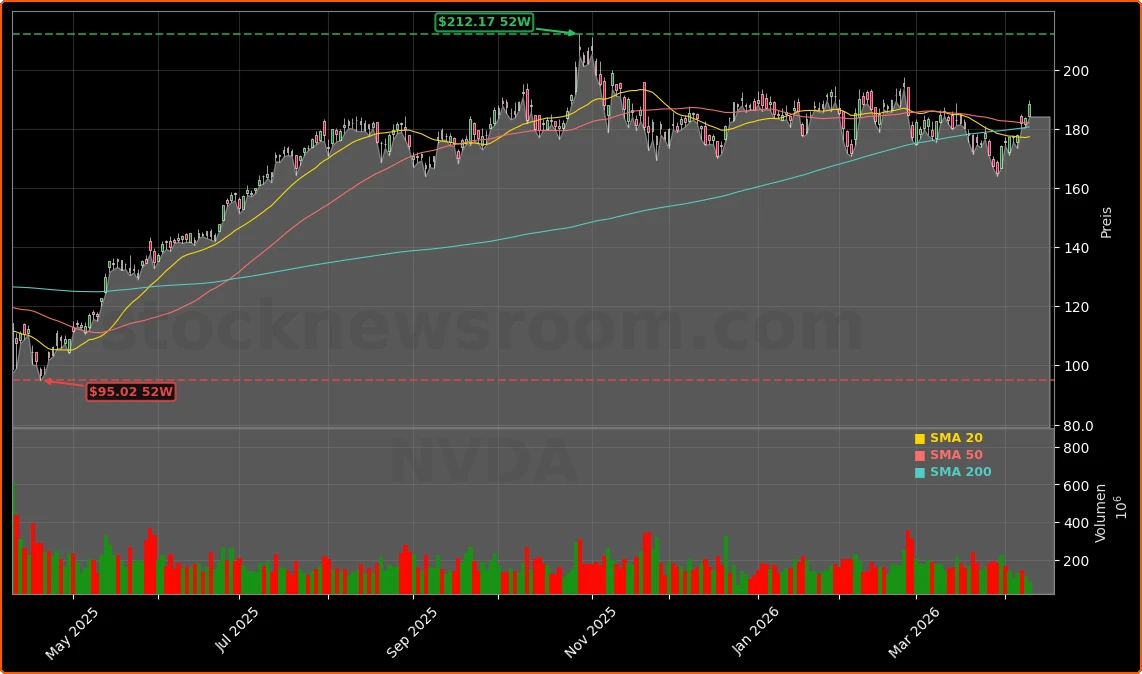

NVIDIA Corporation has added roughly $4 trillion in market value since early 2023 on the back of its dominance in AI‑accelerated data centers. Its GPUs power everything from large language model training to inference workloads for hyperscalers and emerging AI clouds. Despite a choppy 2026 for AI stocks, NVDA is down only about 0.4% year to date and has just logged an eight‑session winning streak, putting it on pace for its longest run of gains since late 2023.

The core of the NVIDIA AI Strategy rests on two pillars: computational leadership with its Blackwell and Vera Rubin architectures, and software lock‑in through the CUDA platform, which has been in development since 2006. CEO Jensen Huang recently reiterated that Blackwell and Rubin together could generate at least $1 trillion in sales through 2027, underscoring NVIDIA's expectation that its data center business will remain central to the global AI capex boom.

How does the NVIDIA AI Strategy lock in data center demand?

NVIDIA’s latest fiscal year (ended Jan. 25, 2026) showed just how powerful that data center engine has become. Data center revenue hit a record $193.7 billion, up 68% year over year, driving total revenue to $216 billion (+65%) and earnings per share to $4.77 (+60%). Wall Street now expects EPS to jump another 74% this year to around $8.29, helped by demand for Blackwell and Rubin systems that can handle both training and inference. Rubin in particular is touted as up to 3.5x faster than Blackwell for training and up to 5x faster for inference, making it a prime candidate for inference at scale.
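
The growth figures above can be cross‑checked with simple arithmetic. The sketch below is a minimal illustration, not anything drawn from NVIDIA's filings: it backs out the prior‑year values implied by each reported growth rate and applies the expected 74% EPS growth to the $4.77 base. The helper names `implied_prior` and `project` are hypothetical, and because the article's percentages are rounded, the implied figures are approximate.

```python
def implied_prior(current: float, growth_pct: float) -> float:
    """Return the prior-year value implied by a current value and its YoY growth (percent)."""
    return current / (1 + growth_pct / 100)


def project(base: float, growth_pct: float) -> float:
    """Apply a growth rate (percent) to a base value."""
    return base * (1 + growth_pct / 100)


# Figures as reported in the article for the fiscal year ended Jan. 25, 2026.
# Growth rates are rounded, so backed-out values are approximations.
reported = {
    "data_center_revenue_bn": (193.7, 68),
    "total_revenue_bn": (216.0, 65),
    "eps_usd": (4.77, 60),
}

for name, (value, growth) in reported.items():
    print(f"{name}: implied prior year ~ {implied_prior(value, growth):.2f}")

# Consensus expects roughly 74% EPS growth this year.
print(f"projected EPS ~ {project(4.77, 74):.2f}")
```

The projection lands at about $8.30, consistent with the roughly $8.29 consensus once rounding in the 74% figure is taken into account.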

Long‑term contracts and ecosystem bets are central to the NVIDIA AI Strategy. Cloud specialist CoreWeave, which relies heavily on NVIDIA hardware, has signed an additional $21 billion capacity deal with Meta on top of $14.2 billion already committed, running through 2032 and focused on inference deployments. In parallel, NVIDIA has forged multi‑gigawatt infrastructure alliances with OpenAI, Anthropic and xAI, giving it multi‑year revenue visibility that many chip peers lack.

Why is NVIDIA spending billions on optics and power?

To sustain its lead, NVIDIA is pushing deeper into the AI supply chain. The company has committed $2 billion to Lumentum in a multi‑year purchase and development agreement for optical components, and has also made a $2 billion strategic investment in Coherent, a vertically integrated photonics supplier. Both moves bet on a future in which high‑speed optics and silicon photonics increasingly replace copper in AI data centers, enabling higher bandwidth and lower power per bit.

On the power side, STMicroelectronics has expanded its 800 VDC power‑conversion portfolio built around NVIDIA reference designs, targeting more efficient, higher‑density power architectures for AI data centers. These hardware and infrastructure partnerships reflect the broader NVIDIA AI Strategy: moving beyond standalone GPUs into full‑stack systems that span compute, networking, optics and power delivery, which makes the platform harder to displace.

Where does NVIDIA face pushback and new rivals?

Even as the stock remains a hedge‑fund favorite, there are signs of caution. MarketBeat reports that Spirit of America Management Corp NY still holds NVDA as its largest position, worth roughly $30.9 million, even after trimming its stake by 2%. That trim comes against a backdrop of more than $215 million in insider selling over the last 90 days. While Wall Street’s consensus rating remains firmly in the “Buy” camp with an average price target around $275, some investors question whether margins can stay near peak levels as competition intensifies.

Hyperscalers such as Amazon, Alphabet and Microsoft are rolling out their own accelerators to reduce their dependence on external GPU suppliers. Amazon, for example, is pushing custom chips as a cheaper alternative inside AWS even as it continues to offer NVIDIA clusters to customers who need maximum performance. Meanwhile, challengers are emerging in inference and edge AI. SK Telecom is partnering with Arm and Rebellions to build low‑power inference servers aimed at trimming reliance on NVIDIA GPUs, and Broadcom’s custom accelerators have drawn heavy demand, though Seaport Research recently downgraded Broadcom’s stock to Neutral, citing industry‑wide supply limits similar to those facing NVIDIA.

How is NVIDIA pushing AI to the edge and beyond?

The NVIDIA AI Strategy is not confined to hyperscale data centers. The company is extending its platform into telecom networks, factories and even space. In wireless, NVIDIA has teamed up with T‑Mobile and Nokia on AI‑RAN infrastructure that turns 5G networks into distributed high‑performance computing platforms. Vision AI agents can run at the network edge using NVIDIA’s Metropolis Video Search and Summarization Blueprint, enabling low‑latency analytics for autonomous systems and smart city deployments.

In robotics and industrial automation, Texas Instruments has joined forces with NVIDIA to integrate sensor fusion and real‑time control for humanoid robots and AI‑driven industrial systems. And in space, Firefly Aerospace is using NVIDIA hardware for on‑orbit AI processing on its Elytra lunar‑orbit vehicle, a sign that NVIDIA’s compute stack is spreading into high‑margin verticals far from its gaming roots. Even decentralized and privacy‑preserving AI is being pulled into NVIDIA’s orbit: AlphaTON Capital is set to deploy NVIDIA B300 clusters through a $43 million deal with Vertical Data to power confidential computing for partners such as Telegram and Animoca Brands.

What does this mean for U.S. investors?

For U.S. portfolios, the key question is whether the NVIDIA AI Strategy can offset growing competition from the hyperscalers’ in‑house chips and the likely easing of GPU scarcity as TSMC ramps its chip‑on‑wafer‑on‑substrate (CoWoS) packaging capacity. At about 21x forward earnings, NVDA trades only modestly above the S&P 500’s multiple despite projected earnings growth roughly four times the index’s. If Huang’s trillion‑dollar data center forecast holds and margins merely normalize rather than collapse, the risk‑reward remains compelling for long‑term investors who can stomach volatility.
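
The valuation argument above is essentially growth‑adjusted. One common way to express it is the price/earnings‑to‑growth (PEG) ratio: forward P/E divided by expected EPS growth in percent. The sketch below is an illustration under stated assumptions, not a valuation model. It uses the article's ~21x forward multiple and ~74% expected EPS growth for NVDA, derives the index growth rate from the "roughly four times" comparison, and plugs in a hypothetical 20x index multiple as a stand‑in for "only modestly above," since the article does not give the S&P 500 figure.

```python
def peg(forward_pe: float, growth_pct: float) -> float:
    """Price/earnings-to-growth: forward P/E divided by expected EPS growth in percent."""
    return forward_pe / growth_pct


# From the article: ~21x forward earnings, ~74% expected EPS growth.
NVDA_PE = 21.0
NVDA_GROWTH_PCT = 74.0

# Assumption: index growth implied by "roughly four times the index".
INDEX_GROWTH_PCT = NVDA_GROWTH_PCT / 4  # 18.5%
# Hypothetical placeholder for the S&P 500 forward multiple (not from the article).
INDEX_PE = 20.0

nvda_peg = peg(NVDA_PE, NVDA_GROWTH_PCT)
index_peg = peg(INDEX_PE, INDEX_GROWTH_PCT)

print(f"NVDA PEG  ~ {nvda_peg:.2f}")
print(f"Index PEG ~ {index_peg:.2f}")
# Relative PEG: how much NVDA pays per unit of growth versus the index.
print(f"Relative PEG ~ {nvda_peg / index_peg:.0%}")
```

Because the relative PEG equals the P/E premium divided by the growth multiple, any premium close to 1x paired with roughly 4x the growth puts NVDA's growth‑adjusted multiple near a quarter of the index's, whatever exact index multiple is assumed.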

Shorter‑term traders see a different angle. With average daily volume above 400 million shares and NVDA frequently the second‑most active name in both the S&P 500 and Nasdaq 100, the stock remains one of Wall Street’s premier trading vehicles. Against that backdrop, any pullback driven by macro worries or rotation out of mega‑cap tech could be used by dollar‑cost‑averaging investors to build or add to positions in this flagship of the AI trade.

Related Coverage

For a deeper dive into how optical investments, custom silicon moves and long‑term capacity deals fit together, readers can explore NVIDIA AI Strategy Boom: $18B Bets to Defend Its Data Center Moat, which breaks down the company’s latest ecosystem bets in detail. On the hyperscaler side, Amazon AI Strategy $200B Boom as AMZN Soars 2.7% looks at how Amazon’s massive capex plans and in‑house chip efforts could both compete with and reinforce demand for NVIDIA GPUs across AWS.

“Right where I stand, I see through 2027 at least $1 trillion in combined sales for Blackwell and Vera Rubin,” said Jensen Huang, CEO of NVIDIA.

In sum, the NVIDIA AI Strategy is evolving from a pure GPU story into a full‑stack infrastructure thesis built on trillion‑dollar data center ambitions, strategic optical and power investments, and a growing footprint at the network edge and in robotics. For investors on Wall Street, that combination keeps NVDA at the center of any serious discussion about AI exposure, with upcoming product cycles and capex budgets likely to determine just how far this AI leader can run.