Will the EU’s crackdown on Meta over child safety merely dent sentiment, or force a costly overhaul of Facebook and Instagram’s growth engine?

How serious is the EU’s case against Meta?

The European Commission’s preliminary view is stark: Facebook and Instagram are not enforcing their own minimum age of 13 and therefore breach the child‑protection obligations of the Digital Services Act (DSA). Investigators say under‑13s can simply enter a false date of birth at signup with virtually no effective verification, and that Meta’s tools for flagging minors already on the platforms are hard to find, require up to seven clicks, and often lead to no meaningful follow‑up.

Brussels also criticizes Meta’s risk assessments for allegedly ignoring available research showing younger children are especially vulnerable to online harms, from addictive design patterns to exposure to violence and hate. Around 10–12% of children under 13 in the EU are estimated to use Facebook or Instagram, a figure regulators see as evidence that enforcement of the age limit is largely symbolic.

If these findings are confirmed in a final decision, Meta could face fines of up to 6% of its worldwide annual revenue, plus potential periodic penalty payments until it complies. With Wall Street expecting roughly $55–56 billion in Q1 revenue and about 30–31% top‑line growth, Meta’s revenue run rate is well above $200 billion a year, so even a fine at the 6% cap would reach into the double‑digit billions of dollars. That is large enough to matter for valuation models, even if investors still treat it as a tail risk.

What does Meta say about child safety?

Meta rejects the Commission’s preliminary conclusions and insists it already invests heavily in child protection. A company spokesperson says Facebook and Instagram are clearly designated for users 13 and older, that the firm deploys technology to detect and remove underage accounts, and that new measures will be announced in the coming days. Meta also frames age verification as an industry‑wide challenge that needs common solutions, rather than a problem any single platform can fully solve alone.

At the same time, the EU is pointing toward its own age‑verification app as a possible benchmark for future compliance. While that app is not yet available across all member states and has itself drawn criticism over security gaps, Brussels wants platforms like Meta, TikTok and Snapchat to adopt either this tool or an equivalent, privacy‑preserving alternative. That could force child‑safety upgrades at Meta that involve material engineering work, particularly if they must be harmonized across Europe’s fragmented regulatory landscape.

For investors, the open question is whether Meta can redesign onboarding, reporting flows and risk models in a way that satisfies EU watchdogs without undermining engagement in a key advertising region. With nearly 3.6 billion daily users across its “Family of Apps”, even small increases in signup friction or tweaks to recommendation algorithms can have measurable revenue effects.

How does this intersect with Meta’s AI and capex story?

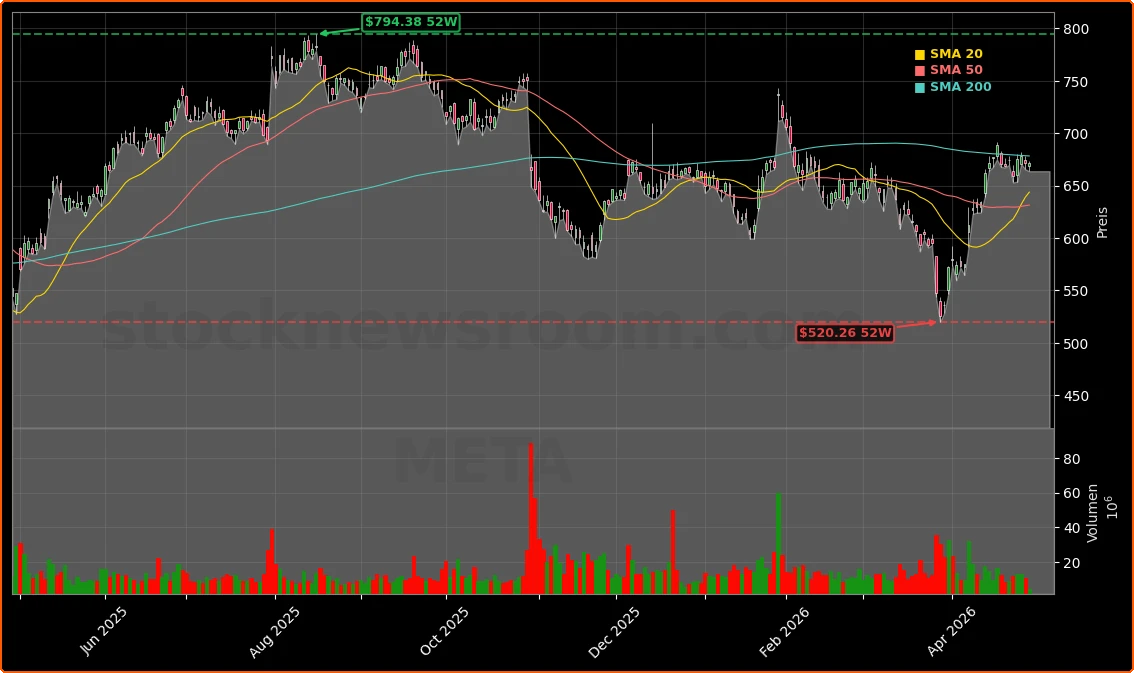

The EU action lands on a crucial day for the stock, with Meta set to report Q1 2026 earnings after the U.S. close. Wall Street is looking for about 31% revenue growth to roughly $55.5 billion and EPS around $6.6–6.7, driven by AI‑boosted ad targeting and strong engagement across Facebook, Instagram and WhatsApp. Yet the shares, at about $671, are only modestly higher year to date and sit roughly 18% below their all‑time high, reflecting skepticism over massive AI capex and ongoing losses at Reality Labs.

Meta has signaled capital expenditures could climb toward $160 billion or more this year as it builds out AI infrastructure, including data‑center buildouts that rely on suppliers like Corning and cloud providers such as Amazon Web Services, which recently inked a multi‑year chip deal with the company. Analysts at firms like Morningstar highlight that this hyperscale spending spree is a defining theme across Big Tech and that any sign of capex moderation or improved returns could be a key catalyst for the stock.

But rising regulatory costs now threaten to pull in the opposite direction. If child‑safety requirements force Meta to build new age‑verification systems, content‑classification models and moderation workflows, some AI investment may need to shift from growth features and monetization toward compliance and risk mitigation. That could weigh on near‑term margins, even if it reinforces Meta’s long‑term moat against smaller social platforms that lack the resources to keep up.

How are rivals and the S&P 500 exposed?

The DSA’s child‑protection rules apply across the board, so the EU’s probe into Meta is a warning shot for other large platforms. Short‑video rival TikTok and messaging‑heavy Snapchat face similar questions about how effectively they keep under‑13s out and protect teenagers from harmful content. For U.S. investors holding diversified Big Tech exposure via the S&P 500, the risk is that the EU sets a strict enforcement blueprint that later extends to other players, increasing compliance costs sector‑wide.

Within the so‑called “Magnificent Seven,” Apple, NVIDIA and Tesla also face regulatory scrutiny in their own domains, but Meta’s exposure is uniquely tied to social media and advertising. Communication services now make up about 10% of the S&P 500, and much of that weight comes from Meta and Alphabet. Any hit to Meta’s growth or margin narrative could therefore ripple through major index ETFs and AI‑themed funds that also hold heavy positions in cloud and chip names.

For now, most Wall Street research desks, including those at large banks like Goldman Sachs and Morgan Stanley, continue to emphasize Meta’s strong ad momentum and AI‑driven productivity gains while flagging regulation as a rising but manageable risk. The earnings call is expected to feature questions on both EU compliance timelines and how far Meta is willing to go in redesigning its products for under‑18s.


Child safety is now a genuine valuation variable for Meta Platforms, Inc., adding regulatory and reputational risk on top of already heavy AI spending and cyclical ad exposure. For long‑term shareholders, the key will be whether Meta can turn stricter safeguards for children into a durable advantage rather than a drag on innovation. The coming quarters, starting with tonight’s earnings and Meta’s formal response to Brussels, will show whether management can balance growth, compliance and capital discipline in a way that keeps the stock attractive for U.S. portfolios.