Is Micron HBM quietly becoming the most critical choke point in the entire AI hardware boom?

Is Micron HBM now the key AI bottleneck?

There is effectively no large‑scale AI without fast memory, and that is precisely where Micron HBM comes in. GPUs can only deliver peak performance if they are continuously fed with data at extremely high bandwidth; otherwise, AI models stall while waiting on memory. High‑bandwidth memory has become the scarce ingredient in this stack, and Micron has emerged as one of the few global suppliers able to deliver it at scale.

The company’s current flagship, HBM3E, leads the industry with roughly 50% more capacity and about 20% lower energy use than rival solutions, putting it in high demand for data center accelerators. Micron is now ramping its next generation, HBM4, which is designed to boost capacity by about 60% over HBM3E while improving energy efficiency by 30%. That step‑change helps hyperscalers lower power bills and pack more AI compute into the same rack footprint.

Micron’s tight integration with leading AI chipmakers is central to the story. Its HBM3E has already been paired successfully with Nvidia’s Blackwell GPUs, and Nvidia plans to use Micron’s HBM4 alongside its upcoming Vera Rubin platform later this year. As long as AI capex remains elevated, this deep alignment with the dominant accelerator vendor should help keep Micron’s position in the AI value chain secure.

How strong is Micron’s HBM‑driven growth?

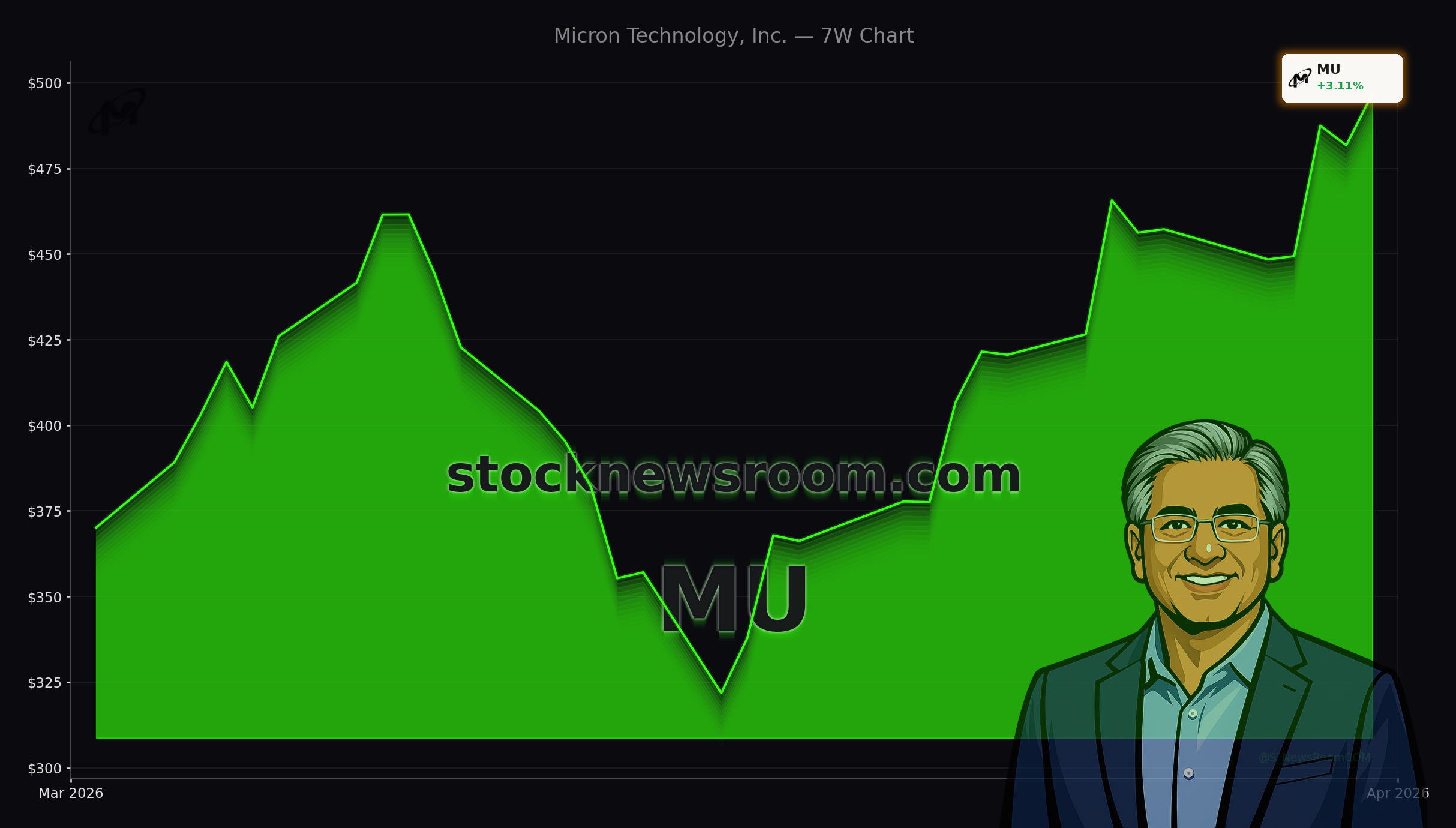

The financial impact of Micron HBM demand is already showing up in the numbers. In fiscal Q2 2026 (ended Feb. 26), total revenue jumped to a record $23.8 billion, up 196% year over year and far ahead of management’s prior outlook of around $18.7 billion. Earnings per share soared 756% to $12.07, lifting trailing 12‑month EPS to $21.18 and putting the stock at roughly 23 times earnings at today’s price near $497.
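For readers who want to check the arithmetic behind those figures, here is a minimal Python sketch. It backs out the year-ago revenue implied by the quoted growth rate and computes the trailing P/E from the quoted EPS and share price. The function and variable names are illustrative, and the inputs are simply the rounded numbers cited above, so the results are approximate. The multiple in particular shifts with the exact share price used, since the article only gives a price "near $497."

```python
def prior_period_value(current: float, growth_pct: float) -> float:
    """Back out the year-ago figure implied by a year-over-year growth rate."""
    return current / (1 + growth_pct / 100)


def price_to_earnings(price: float, eps: float) -> float:
    """Price divided by earnings per share."""
    return price / eps


# Rounded figures as quoted in the article (assumed inputs, not exact filings).
revenue_q2_fy26 = 23.8       # $ billions
revenue_growth_pct = 196.0   # year over year
ttm_eps = 21.18              # trailing 12-month EPS, $
share_price = 497.0          # approximate share price, $

implied_prior_revenue = prior_period_value(revenue_q2_fy26, revenue_growth_pct)
trailing_pe = price_to_earnings(share_price, ttm_eps)

print(f"Implied year-ago revenue: ${implied_prior_revenue:.1f}B")
print(f"Trailing P/E: {trailing_pe:.1f}")
```

The same `prior_period_value` helper can be applied to any other growth figure in the piece, such as the segment or guidance numbers, to see the base each percentage is measured against.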

The cloud memory unit, where Micron reports its data center HBM sales, has become the core growth engine. Segment revenue reached $7.7 billion in Q2, up 163% year over year and accelerating from 100% growth in Q1. That makes cloud memory Micron’s largest business line and underscores just how central AI infrastructure has become to the company’s future.

Management’s guidance suggests the momentum is far from over. For the current fiscal Q3 2026, Micron targets around $33.5 billion in revenue, implying about 260% growth versus the prior‑year quarter. With CEO Sanjay Mehrotra stating that all of Micron’s 2026 HBM output, including HBM4, is already sold out under long‑term contracts, visibility into near‑term demand is unusually strong for a historically cyclical memory producer.

Can Micron keep outpacing other AI chip names?

On Wall Street, Micron is increasingly discussed alongside the largest AI winners. The market cap sits around $560 billion after a roughly 600% share price gain over the past year, and yet the valuation still screens as reasonable. Micron’s trailing P/E sits below that of the S&P 500, which is in the mid‑20s, despite far faster earnings growth and structurally higher margins tied to HBM scarcity.

Recent coverage from 24/7 Wall St. has labeled Micron and Applied Optoelectronics as “AI bottleneck stocks,” citing memory and optics as the two pinch points in the AI build‑out. Quant rankings across major semiconductor indices also place Micron near the top of the group, while the Philadelphia Semiconductor Index recently moved through 10,000 for the first time, reflecting broad enthusiasm for AI‑linked chipmakers. At the ETF level, Micron has been a notable contributor to recent 52‑week highs in growth funds focused on large‑cap tech exposure.

Yet not every signal is purely bullish. A Benzinga analysis of chip stocks flagged Micron, Intel, AMD and Texas Instruments as part of a rally that is starting to resemble late‑cycle behavior from the dot‑com era, with price moves outpacing some analysts’ target updates. For U.S. investors, that juxtaposition of very strong fundamentals with an extremely fast tape is a reminder to balance optimism with position‑sizing discipline.

How big is the long‑term opportunity for Micron?

Micron estimates that the data center HBM market was worth about $35 billion in 2025 and could approach $100 billion annually by 2028, driven by continued AI deployment and the shift toward more memory‑intensive architectures. Because HBM consumes significantly more wafer capacity than conventional DRAM for the same number of bits, often around three times as much, the technology tightens overall supply and supports higher pricing across the memory stack.
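To put that market forecast in annualized terms, the short Python sketch below computes the compound annual growth rate implied by going from about $35 billion in 2025 to about $100 billion in 2028. The `cagr` helper and variable names are illustrative, and the endpoints are the rounded estimates quoted above, so the result is only a rough indication.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end / start) ** (1 / years) - 1


# Rounded market-size estimates quoted in the article, in $ billions.
hbm_market_2025 = 35.0
hbm_market_2028 = 100.0

implied_growth = cagr(hbm_market_2025, hbm_market_2028, years=2028 - 2025)

print(f"Implied HBM market CAGR, 2025 to 2028: {implied_growth:.1%}")
```

On these inputs the market would need to grow by a little over 40% a year for three straight years, which gives a sense of how much continued AI spending the forecast assumes.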

That dynamic helps explain Micron’s extraordinary earnings power today. Wall Street consensus currently sees EPS potentially exceeding $100 by fiscal 2027 if AI demand and pricing hold up, implying a forward P/E of roughly 5 at today’s price near $497. However, professional investors also recognize that memory remains cyclical. As Micron, Samsung and SK Hynix all bring more HBM capacity online over the next few years, the current supply‑demand imbalance is likely to ease, pressuring prices and margins.

Against that backdrop, analysts at major U.S. banks have generally maintained positive views on Micron’s strategic position while cautioning about volatility once the current cycle matures. Many investors are opting to build positions gradually, using pullbacks in the broader semiconductor complex as opportunities rather than chasing short‑term spikes.

Micron HBM has clearly moved the stock into the center of the AI infrastructure trade, combining sold‑out capacity, record earnings and deep integration with leading GPU platforms. For long‑term investors in the U.S. and abroad, the stock offers direct exposure to the memory bottleneck behind generative AI, albeit with the familiar risk that future HBM supply could reset today’s lofty margins. The next few quarters of Micron’s HBM4 ramp and contract wins will show whether the company can turn this exceptional cycle into a lasting structural advantage.